Author: geoff

the problem with windows

Now, before you start sending me hate mail because you think this posting is a Windows vs. Mac lecture, hold your horses. That’s NOT the kind of windows I’m talking about. This one’s about windowing functions and one (possibly unexpected) effect on the results of the analysis of the impulse response of an allpass filter. So, if you want to debate Windows vs. Mac – go somewhere else. If you think that you can get all riled up over a Blackman Harris window function, read on!

Last week I had to do some frequency-domain analysis of a system that had a small problem with noise in its impulse response measurements. The details of where the noise came from are unimportant. There is only one important thing from the back-story that you need to know – and that is that I was measuring the response of an allpass filter implementation.

So, I did my MLS measurement of the allpass filter and, because I had noise in the impulse response, I chose to use a windowing function to clean up the impulse response’s tail. Now, I know that using a windowing function (or a DFT, for that matter) has consequences that one needs to be aware of. However, the consequence that I stumbled on was a new one for me – although in retrospect, it should not have been.

Here’s a sterilised version of what happened, just in case it’s of use.

Below is a plot showing a (very clean) impulse response of an allpass filter. To be more specific, it’s a 4th order Linkwitz Riley crossover with a crossover frequency of 100 Hz, where I summed the outputs of the high pass and low pass components together to make an output. (We will not discuss why I did it this way, since that information is outside the scope of this discussion.) In addition, I have plotted three windowing functions, a Hann, a Hamming and a Blackman Harris.

Note that the length of the windowing functions is big – 65536 samples to be exact. As you can see in the plot, the ringing of the allpass filter is negligible by the time we get to the end of the window. This can also be seen in the next two plots, where I’ve shown the impulse response after it has been windowed by the three functions (actually four, if we include rectangular as a function), on linear and dB FS scales. (I know, I know, dB FS is an RMS measurement and I plotted this as instantaneous values – sue me.)

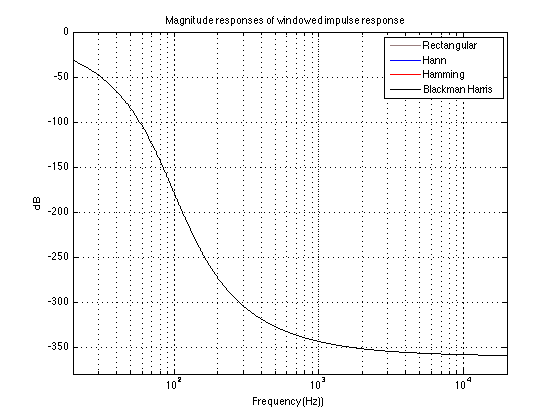

So, if you now take those windowed impulse responses and calculate their magnitude and phase responses, you get the plots shown below.

“So what?” I hear you cry. The magnitude responses of the four versions of the windowed impulse response are all identical enough that their plots lie on top of each other. This is also true for their phase responses. “I see what I would expect to see – what are you complaining about?” I hear you cry.

Well, let me tell you. The plots above show the results when you use a 65536-point FFT and a 65536-sample window (okay, okay, DFT – sue me).

Let’s do all that again, but with a 65536-point FFT and a 1024-point window instead (I did this in MATLAB, so it’s zero-padding the impulse responses with the remaining 65536-1024 = 64512 samples.)

Now we can see immediately that the ringing in the allpass filter’s impulse response hasn’t settled down by the time we get to the end of the window. This can also be seen in the following two plots.

- The result of the windowing functions on the impulse response.

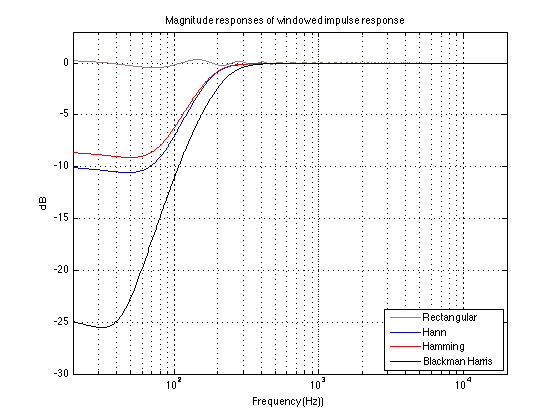

As you can see there, the impulse response itself (aka “Rectangular” windowing) is only about 60 dB below its peak when we reach the end of the window. How does this then affect our magnitude response?

As you can see there, the consequence of the rectangular window is a ripple in the low end of the calculated magnitude response. As you can also see, the result of attenuating the tail of the allpass filter’s impulse response before we unceremoniously cut it off is that we lose low end in the magnitude response. The more the windowing function attenuates the tail, the more low end we lose.
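For anyone who wants to poke at this themselves, here is a rough Python sketch of the effect (not the MATLAB code behind the plots). It rests on a few assumptions that are mine, not facts from the measurement: a 48 kHz sample rate, a 2nd-order allpass biquad with Q = 1/√2 standing in for the summed 4th-order Linkwitz Riley outputs, and windows that are the decaying right-hand half of each function, starting at 1 on the first sample. The function names are made up for this example. Instead of an FFT, it evaluates the spectrum directly at a few frequencies, which samples the same curve that a zero-padded FFT samples at its bin frequencies.

```python
import cmath
import math

FS = 48000.0           # sample rate in Hz (assumed; not stated in the post)
FC = 100.0             # crossover frequency from the post
Q = 1 / math.sqrt(2)   # assumed: summed LR4 outputs modelled as a 2nd-order allpass


def allpass_impulse_response(num_samples):
    """Impulse response of a 2nd-order allpass biquad (bilinear-transform design)."""
    w0 = 2 * math.pi * FC / FS
    alpha = math.sin(w0) / (2 * Q)
    a0 = 1 + alpha
    b = [(1 - alpha) / a0, -2 * math.cos(w0) / a0, 1.0]
    a = [1.0, -2 * math.cos(w0) / a0, (1 - alpha) / a0]
    h = []
    x1 = x2 = y1 = y2 = 0.0
    for n in range(num_samples):
        x0 = 1.0 if n == 0 else 0.0
        y0 = b[0] * x0 + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        h.append(y0)
        x2, x1 = x1, x0
        y2, y1 = y1, y0
    return h


def half_window(name, length):
    """Decaying half of a cosine-sum window: 1.0 at n = 0, smallest at the end."""
    coeffs = {
        "rectangular": [1.0],
        "hann": [0.5, 0.5],
        "hamming": [0.54, 0.46],
        "blackman-harris": [0.35875, 0.48829, 0.14128, 0.01168],
    }[name]
    return [
        sum(c * math.cos(k * math.pi * n / (length - 1)) for k, c in enumerate(coeffs))
        for n in range(length)
    ]


def magnitude_db(h, window, freq_hz):
    """Magnitude in dB of the windowed impulse response at a single frequency."""
    acc = sum(
        hn * wn * cmath.exp(-2j * math.pi * freq_hz * n / FS)
        for n, (hn, wn) in enumerate(zip(h, window))
    )
    return 20 * math.log10(abs(acc))


FREQS_HZ = (10.0, 100.0, 1000.0)
h = allpass_impulse_response(65536)
results = {}
for name in ("rectangular", "hann", "hamming", "blackman-harris"):
    for length in (65536, 1024):
        w = half_window(name, length)
        results[(name, length)] = [magnitude_db(h[:length], w, f) for f in FREQS_HZ]
        row = ", ".join(f"{f:g} Hz: {m:+.2f} dB"
                        for f, m in zip(FREQS_HZ, results[(name, length)]))
        print(f"{name:16s} {length:6d} samples  {row}")
```

With the long window, every row should sit very close to 0 dB. With the 1024-sample window, the tapered functions should read low at 10 Hz, and the Blackman Harris row lower than the Hann row. The exact numbers depend on the sample rate, so at the assumed 48 kHz the rectangular truncation error will not necessarily match the ripple in the plots.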

Of course, this also has implications for the phase response of the windowed impulse responses, as is shown below.

The moral of this story is not a new one: beware of the effects of a windowing function on your analysis.

In my personal case, it’s a memorable lesson, since I didn’t get to this conclusion immediately. This is because I was measuring the allpass with different Fc’s – and what I saw in my magnitude response was a shelving response (I was using a Blackman Harris window). When I changed the Fc of the allpass, the shelving response that I saw moved appropriately. So, my conclusion was that there was a problem in my filter that I was measuring. It took some time (too much time!) before I figured out (with the help of some more level-headed friends) that my problem was the window length and my windowing function, not the filter that I was measuring. Won’t make that mistake again for a while…

copy cats – but entertaining copy cats

https://www.youtube.com/watch?v=qybUFnY7Y8w

is cool but

came first

wish i had done one of those…

achieving distance and depth in stereo recordings – one man’s opinion

I had an interesting email from an old recording-engineer friend of mine this week regarding a debate he had with a student concerning the issue of “depth” in recordings (in his specific case, 2-channel stereo recordings done with an ORTF mic configuration). This got me thinking back to a bunch of thoughts I had once-upon-a-time about distance perception, and a newer bunch of thoughts about loudspeaker directivity. Now, those two bunches of thoughts are congealing into a single idea regarding how to achieve (and experience) a reasonable perceived sensation of distance and depth in 2-channel stereo.

To start, some definitions:

- When I say “stereo” I mean “2-channel sound recording”

- “Distance” to a source in a stereo recording is the perceived distance between the listener and the (probably phantom) image.

- “Depth” in a stereo recording is the difference in the perceived distances from the listener to the closest and farthest (probably phantom) images (i.e. the distance to the concert master vs. the distance to the xylophone in a symphony orchestra)

Go to an anechoic chamber with a loudspeaker and a friend. Sit there and close your eyes and get your friend to place the loudspeaker some distance from you. Keep your eyes closed, play some sounds out of the loudspeaker and try to estimate how far away it is. You will be wrong (unless you’re VERY lucky). Why? It’s because, in real life with real sources in real spaces, distance information (in other words, the information that tells you how far away a sound source is) comes mainly from the relationship between the direct sound and the early reflections. If you get the direct sound only, then you get no distance information. Add the early reflections and you can very easily tell how far away it is. This has been proven in lots of “official” listening tests. (For example, go check out this report as a basic starting point.)

Anecdote #1: Back in the old days when I was working on my Ph.D. we had an 8-loudspeaker system in the lab – one speaker every 45° in a circle around the listening position. We were trying to build a multichannel room simulator where we were building a sound field, piece by piece – the direct sound and (up to 3rd-order) early reflections had the “correct” panning, delay and gain, and we added a diffuse field to tail in behind it. One of the interesting things that I found with that system was that the simulated distance to the source was easy to achieve with just the 1st-order reflections, but that the precision of that perceived distance was increased as we added 2nd- and 3rd-order reflections. (We didn’t have enough computing power to simulate higher-order reflections at the time. It would be interesting to go back and try again to see what would happen with higher-order stuff now that my Mac has gotten a little faster…) Another interesting thing (although, in retrospect, it shouldn’t surprise anyone) was that the location and the distance to the simulated sound source were also easy to determine without the direct sound being part of the sound field at all. Just the 1st- to 3rd-order reflections by themselves were enough to tell you where things were.

Anecdote #2: I did a recording for Atma once-upon-a-time in a large church in Montreal with a very long reverb time. During the sessions, I sat in the church (no control room), about 20 m from the mic pair. So, when the organist and I discussed what take to do next, we were talking live in the same room – no talkback speakers. During the editing for this disc, I happened to be shuttling around, looking for the beginning of a take – so I’d drop the cursor somewhere on the screen and hit “play” quickly to see where I was. One of the takes ended with the organist asking “did we get it?” and I responded “yup” quickly and loudly. It just so happened that, when I was shuttling around, looking for the right take, I hit “play” at the beginning of the “yup” and then quickly hit “stop”. The interesting thing is that it sounded, for that split second, like I was right next to the microphones – not 20 m away like I knew I was. So, I hit “play” again, and this time didn’t hit stop. This time, I sounded far away. What’s going on? Well, because the church was so big, it was possible to hit the stop button before any of the first reflections came in (save maybe the one off the floor), so it was possible (with a fast enough thumb on the transport buttons of the editing machine) to make the recording of my voice anechoic. The result was that I sounded 0 m away instead of 20 m.

The moral of the stories thus far? In order to deliver a perception of precise distance and depth (even if it’s not accurate…) you need early reflections in the recording, and they have to be panned and delayed appropriately.
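To put some rough numbers on “panned and delayed appropriately”, here is a small sketch of the image-source idea for 1st-order reflections in a shoebox-shaped room. Everything in it is an assumption for illustration: the room dimensions, the positions, the 343 m/s speed of sound, the perfectly reflective walls and the function name. Only the 20 m spacing comes from the church anecdote. Each wall is treated as a mirror: the reflection behaves like a second source at the mirrored position, so its delay, its level relative to the direct sound and its direction of arrival all fall out of simple geometry.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed (roughly 20 °C air)


def first_order_reflections(room, source, listener):
    """Direct sound plus the six 1st-order reflections in a shoebox room.

    room is (length, width, height) in metres; source and listener are (x, y, z).
    Returns tuples of (label, delay_ms, gain_re_direct, azimuth_deg), sorted by
    delay. The gain uses 1/r spreading only, and the azimuth is the plan-view
    arrival direction at the listener, measured from the +x axis.
    """
    def describe(label, position, direct_distance):
        dx, dy, dz = (p - l for p, l in zip(position, listener))
        distance = math.sqrt(dx * dx + dy * dy + dz * dz)
        delay_ms = 1000.0 * distance / SPEED_OF_SOUND
        gain = direct_distance / distance
        azimuth = math.degrees(math.atan2(dy, dx))
        return (label, delay_ms, gain, azimuth)

    direct_distance = math.dist(source, listener)
    paths = [describe("direct", source, direct_distance)]
    for axis, name in enumerate(("x", "y", "z")):
        for wall, side in ((0.0, "min"), (room[axis], "max")):
            image = list(source)
            image[axis] = 2.0 * wall - source[axis]  # mirror the source in this wall
            paths.append(describe(f"{name}-{side} wall", image, direct_distance))
    return sorted(paths, key=lambda p: p[1])


# Hypothetical large church: 40 m x 15 m x 18 m, talker 20 m from the mics.
paths = first_order_reflections(
    room=(40.0, 15.0, 18.0),
    source=(30.0, 7.5, 1.5),
    listener=(10.0, 7.5, 1.5),
)
for label, delay_ms, gain, azimuth in paths:
    print(f"{label:12s} {delay_ms:7.2f} ms  gain {gain:5.2f}  azimuth {azimuth:7.1f} deg")
```

In this made-up room the floor reflection (the z-min wall) arrives well under a millisecond after the direct sound, while the next reflections trail it by more than 10 ms, which fits the “save maybe the one off the floor” remark above.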

Step 3: The delivery

Think back to Step 1. We agreed (or at least I said…) that early reflections tell your brain how far away the sound source is. Now think of a loudspeaker in a listening room.

Case #1: If you have an anechoic room, there are no early reflections, and, regardless of how far away the loudspeakers are, a sound source in the recording without early reflections (i.e. a close-mic’ed vocal) will sound much closer to you than the loudspeakers.

Case #2: If you have a listening room with early reflections, but the loudspeakers are directional such that there is no energy being delivered to the side walls (for example, a dipole with the angles carefully chosen to point the null of the loudspeaker at the point of specular reflection from the side wall), then the result is the same as in Case 1. This time there are no early reflections because of loudspeaker directivity instead of wall absorption, but the effect at the listening position is the same.

Case #3: If you have a listening room with early reflections, and the loudspeakers are omni-directional, then the early reflections from the side walls tell you how far away the loudspeakers are. Therefore, the close-mic’ed vocal track from Case #1 cannot sound any closer than the loudspeakers – your brain is too smart to be told otherwise.
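The geometry behind Case #2 can be sketched in a few lines. This is a plan-view toy example under assumptions of my own: the side wall lies along the line x = 0, the loudspeaker is an ideal figure-of-eight (gain proportional to the cosine of the angle off its axis), and the positions and the function name are made up. The specular reflection point is found by mirroring the listener in the wall, and the dipole is then rotated so that its null points at that spot.

```python
import math


def aim_dipole_null(speaker, listener):
    """Plan-view sketch with the side wall along the line x = 0.

    speaker and listener are (x, y) positions in metres with x > 0.
    Returns (axis_deg, gain_to_listener, gain_to_wall), where the gains follow
    an ideal figure-of-eight pattern, |cos(angle off axis)|.
    """
    xs, ys = speaker
    xl, yl = listener
    # Mirror the listener in the wall; the straight line from the speaker to
    # that image crosses the wall at the specular reflection point.
    t = xs / (xs + xl)
    y_reflection = ys + t * (yl - ys)
    to_wall = math.atan2(y_reflection - ys, -xs)
    to_listener = math.atan2(yl - ys, xl - xs)
    # A dipole's null sits 90 degrees off its axis, so turn the axis a quarter
    # turn away from the direction of the reflection point.
    axis = to_wall - math.pi / 2
    gain_to_listener = abs(math.cos(to_listener - axis))
    gain_to_wall = abs(math.cos(to_wall - axis))
    return math.degrees(axis), gain_to_listener, gain_to_wall


# Hypothetical layout: speaker 1 m from the side wall, listener 3 m further back.
axis_deg, gain_to_listener, gain_to_wall = aim_dipole_null(
    speaker=(1.0, 0.0), listener=(2.5, 3.0))
print(f"dipole axis at {axis_deg:.1f} deg from the +x axis")
print(f"gain toward listener: {gain_to_listener:.3f}")
print(f"gain toward reflection point: {gain_to_wall:.3g}")
```

In this layout the listener ends up only slightly off the dipole’s axis, so the direct sound loses very little level while the side-wall reflection is (ideally) removed. A real loudspeaker’s null is neither perfectly deep nor frequency-independent, so treat this as the geometry only.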

The punchline

So, if you want to achieve precision in the distance and depth of your stereo recordings (whether you’re on the recording end or the playback end) you’re going to need to make sure that you have a reasonable mix of the following:

- Early reflections have to be there in the recording itself, and they have to arrive at the right times, with the right gains and the right panning

- Not much energy in the early reflections in your listening room – either by putting some absorption on the walls in the right places, or by having reasonably directional loudspeakers (or both).

some patents

you know you don’t live in the world’s culinary capital when…

how much is left?

seems unjust to me

on the state of inequality in america, joseph e. stiglitz writes: “the six heirs to the wal-mart empire command wealth of $69.7 billion, which is equivalent to the wealth of the entire bottom 30 percent of u.s. society.”