Thanks to George Massenburg at GML Inc. (www.massenburg.com) for his kind permission to use include this chapter which was originally written as part of a manual for one of their products.

Once upon a time, in the days before audio was digital, when you made a long-distance phone call, there was an actual physical connection made between the wire running out of your phone and the phone at the other end. This caused a big problem in signal quality because a lot of high-frequency components of the signal would get attenuated along the way. Consequently, booster circuits were made to help make the relative levels of the various frequencies equal. As a result, these circuits became known as equalizers. Nowadays, of course, we don’t need to use equalizers to fix the quality of long-distance phone calls, but we do use them to customize the relative balance of various frequencies in an audio signal.

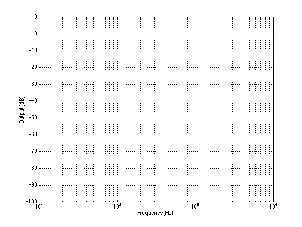

In order to look at equalizers and their smaller cousins, filters, we’re going to have to look at their frequency response curves. This is a description of how the output level of the circuit compares to the input for various frequencies. We assume that the input level is our reference, sitting at 0 dB and the output is compared to this, so if the signal is louder at the output, we get values greater than 0 dB. If it’s quieter at the output, then we get negative values at the output.

Before diving straight in and talking about how equalizers behave, we’ll start with the basics and look at four different types of filters. Just like a coffee filter keeps coffee grinds trapped while allowing coffee to flow through, an audio filter lets some frequencies pass through unaffected while reducing the level of others.

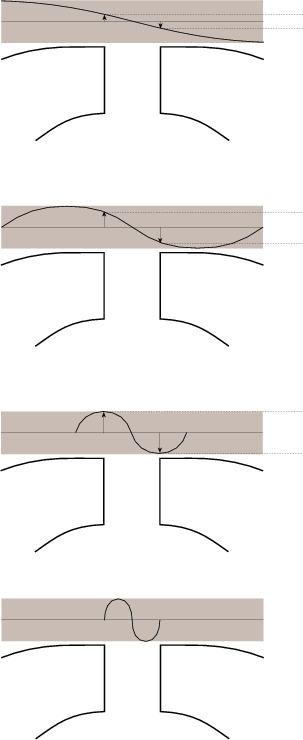

One of the conceptually simplest filters is known as a low-pass filter because it allows low frequencies to pass through it. The question, of course, is “how low is low?” The answer lies in a single frequency known as the cutoff frequency or fc. This is the frequency where the output of the filter is 3.01 dB lower than the maximum output for any frequency (although we normally round this off to -3 dB which is why it’s usually called the 3 dB down point). “What’s so special about -3 dB?” I hear you cry. This particular number is chosen because -3 dB is the level where the signal is at one half the power of a signal at 0 dB. So, if the filter has no additional gain incorporated into it, then the cutoff frequency is the one where the output is exactly one half the power of the input. (Which explains why some people call it the half-power point.)

As frequencies get higher and higher, they are attenuated more and more. This results in a slope in the frequency response graph which can be calculated by knowing the amount of extra attenuation for a given change in frequency. Typically, this slope is specified in decibels per octave. Since the higher we go, the more we attenuate in a low pass filter, this value will always be negative.

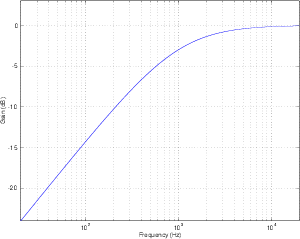

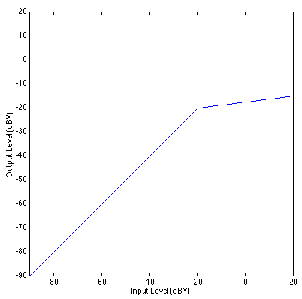

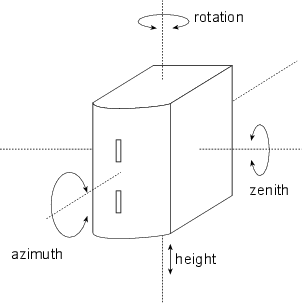

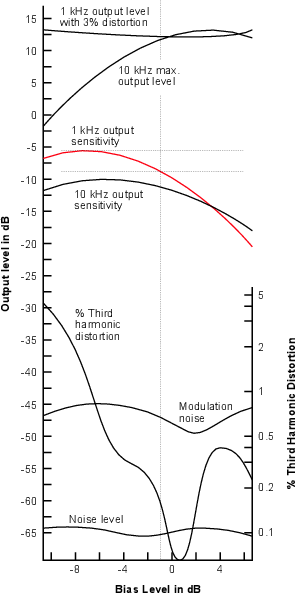

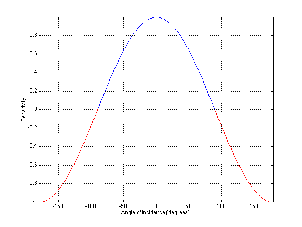

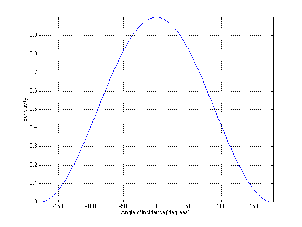

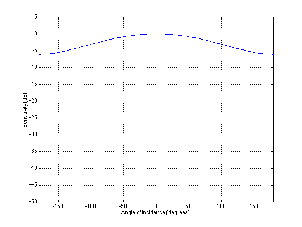

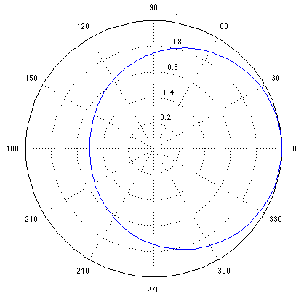

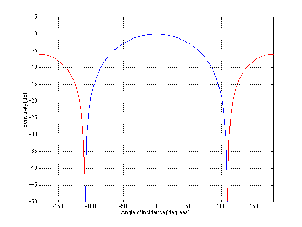

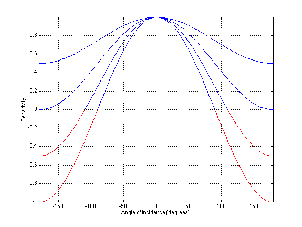

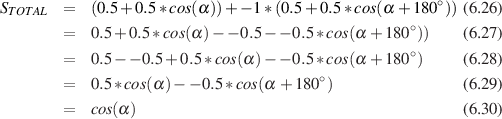

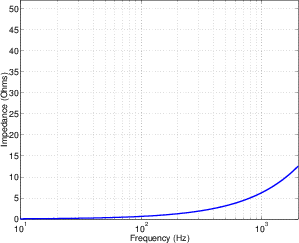

The slope of the filter is determined by its order. If we oversimplify just a little, a first-order low-pass filter will have a slope of -6.02 dB per octave above its cutoff frequency (usually rounded to -6 dB/oct). If we want to be technically correct about this, then we have to be a little more specific about where we finally reach this slope. Take a look at the frequency response plot in Figure 6.1. Notice that the graph has a nice gradual transition from a slope of 0 (a horizontal line) in the really low frequencies to a slope of -6 dB/oct in the really high frequencies. In the area around the cutoff frequency, however, the slope is changing. If we want to be really accurate, then we have to say that the slope of the frequency response is really 0 for frequencies less than one tenth of the cutoff frequency. In other words, for frequencies more than one decade below the cutoff frequency. Similarly, the slope of the frequency response is really -6.02 dB/oct for frequencies more than one decade above (ten times) the cutoff frequency.

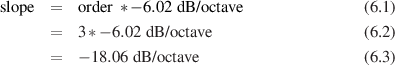

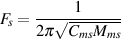

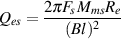

If we have a higher-order filter, the cutoff frequency is still the one where the output drops by 3 dB, however the slope changes to a value of -6.02n dB/oct, where n is the order of the filter. For example, if you have a 3rd-order filter, then the slope is

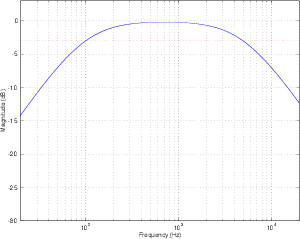

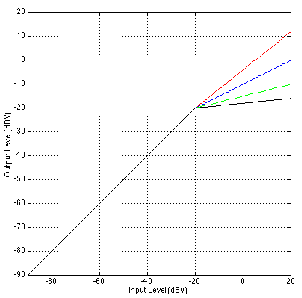

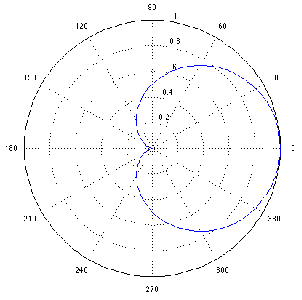

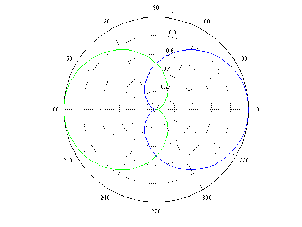

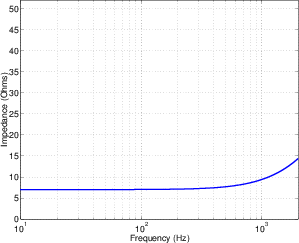

A high-pass filter is essentially exactly the same as a low-pass filter, however, it permits high frequencies to pass through while attenuating low frequencies as can be seen in Figure 6.2. Just like in the previous section, the cutoff frequency is where the output has a level of -3.01 dB but now the slope below the cutoff frequency is positive because we get louder as we increase in frequency. Just like the low-pass filter, the slope of the high-pass filter is dependent on the order of the filter and can be calculated using the equation 6.02n dB/oct, where n is the order of the filter.

Remember as well that the slope only applies to frequencies that are at least one decade away from the cutoff frequency.

Let’s take a signal and send it through a high-pass filter and a low-pass filter in series, so the output of one feeds into the input of the other. Let’s also assume for a moment that the two cutoff frequencies are more than a decade apart.

The result of this probably won’t hold any surprises. The high-pass filter will attenuate the low frequencies, allowing the higher frequencies to pass through. The low-pass filter will attenuate the high frequencies, allowing the lower frequencies to pass through. The result is that the high and low frequencies are attenuated, with a middle band (called the passband) that’s allowed to pass relatively unaffected.

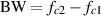

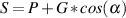

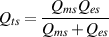

The system described in the previous section is called a bandpass filter and it has a couple of specifications that we should have a look at. The first is the width of the passband. This bandwidth is calculated using the difference two cutoff frequencies which we’ll label fc1 for the lower one and fc2 for the higher one. Consequently, the bandwidth is calculated using the equation:

| (6.4) |

So, using the example of the filter frequency response shown in Figure 6.3, the bandwidth is 5,000 Hz – 100 Hz = 4900 Hz.

We can also calculate the middle of the passband using these two frequencies. It’s not quite so simple as we’d like, however. Unfortunately, it’s not just the frequency that’s half-way between the low and high frequency cutoff’s. This is because frequency specifications don’t really correspond to the way we hear things. Humans don’t usually talk about frequency – they talk about pitches and notes. They say things like “Middle C” instead of “262 Hz.” They also say things like “one octave” or “one semitone” instead of things like “a bandwidth of 262 Hz.”

Consider that, if we play the A below Middle C on a well-tuned piano, we’ll hear a note with a fundamental of 220 Hz. The octave above that is 440 Hz and the octave above that is 880 Hz. This means that the bandwidth of the first of these two octaves is 220 Hz (it’s 440 Hz – 220 Hz), but the bandwidth of the second octave is 440 Hz (880 Hz – 440 Hz). Despite the fact that they have different bandwidths, we hear them each as one octave, and we hear the 440 Hz note as being half-way between the other two notes. So, how do we calculate this? We have to find what’s known as the geometric mean of the two frequencies. This can be found using the equation

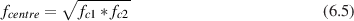

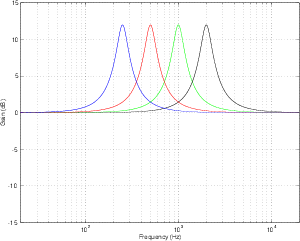

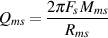

Let’s say that you want to build a bandpass filter with a bandwidth of one octave. This isn’t difficult if you know the centre frequency and if it’s never going to change. For example, if the centre frequency was 440 Hz, and the bandwidth was one octave wide, then the cutoff frequencies would be 311 Hz and 622 Hz (we won’t worry too much at the moment about how I arrived at these particular numbers). What happens if we leave the bandwidth the same at 311 Hz, but change the centre frequency to 880 Hz? The result is that the bandwidth is now no longer an octave wide – it’s one half of an octave. So, we have to link the bandwidth with the centre frequency so that we can describe it in terms of a fixed musical interval (for you engineers, a musical interval is a measure of the distance between two notes). This is done using what is known as the quality or Q of the filter, calculated using the equation:

Now, instead of talking about the bandwidth of the filter, we can use the Q which gives us an idea of the width of the filter in musical terms. This is because, as we increase the centre frequency, we have to increase the bandwidth proportionately to maintain the same Q. Notice however, that if we maintain a centre frequency, the smaller the bandwidth gets, the bigger the Q becomes, so if you’re used to talking in terms of musical intervals, you have to think backwards. The bigger the Q, the smaller the interval.

Remember that you can have a very high Q, and therefore a very narrow bandwidth for a bandpass filter. All of the definitions still hold, however. The cutoff frequencies are still the points where we’re 3 dB lower than the maximum value and the bandwidth is still the distance in Hertz between these two points and so on...

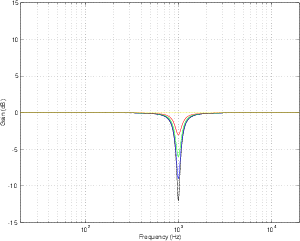

Although bandpass filters are very useful at accentuating a small band of frequencies while attenuating others, sometimes we want to do the opposite. What if we want to attenuate a small band of frequencies while leaving the rest alone? This can be accomplished using a band-reject filter (also known as a bandstop filter) which, as its name implies, rejects (or usually just attenuates) a band of frequencies without affecting the surrounding material. The frequency response of this can be seen in Figure 6.4.

The thing to be careful of when describing band-reject filters is the fact that cutoff frequencies are still defined as the points where we’ve dropped in level by 3 dB from the maximum output.

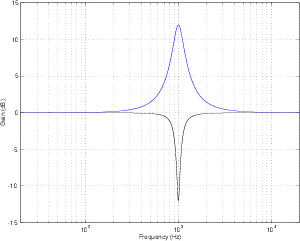

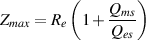

There is a special breed of band-reject filter that is designed to have almost infinite attenuation at a single frequency, leaving all others intact. This, of course is impossible, but we can come close. If we have a band-reject filter with a very high Q, the result is a frequency response like the one shown in Figure 6.5. The shape is basically a flat frequency response with a narrow, deep notch at one frequency – hence the name notch filter

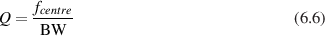

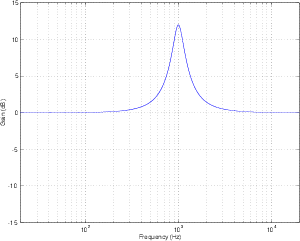

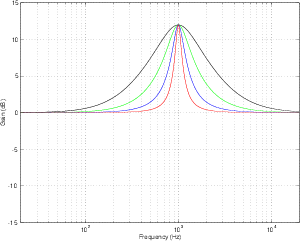

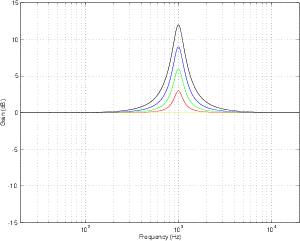

There is a variation on the bandpass filter that, instead of attenuating all frequencies outside the passband, the filter typically leaves them at a gain of 0 dB. This kind of filter can be seen in the plot of an in Figure 6.6 and is called a peaking filter or peak filter. Notice now that, rather than attenuating all unwanted frequencies, the filter can be thought of as simply applying a known gain in the passband. The further away you get from the passband, the less the signal is affected. Notice, however, that we still measure the bandwidth using the two points that are 3 dB down from the peak of the curve.

In most instances of these kinds of filters, it is also possible to attenuate the same frequency band, as is shown in Figure 6.10. Since the practical implementation of this filter allows you to boost and attenuate, they are commonly known of as peak/dip filters.

Unlike its counterpart from the days of long-distance phone calls, a modern equalizer is a device that is capable of attenuating and boosting frequencies according to the desire and expertise of the user. There are four basic types of equalizers, but we’ll have to talk about a couple of issues before getting into the nitty-gritty.

An equalizer typically consists of a collection of filters, each of which permits you to control one or more of three things: the gain, centre frequency and Q of the filter. There are some minor differences in these filters from the ones we discussed above, but we’ll sort that out before moving on. Also, the filters in the equalizer may be connected in parallel or in series, depending on the type of equalizer and the manufacturer.

To begin with, as we’ll see, a filter in an equalizer comes in two basic models, the peak/dip filter and the shelving filter which is a type of variation on the highpass and low pass filters.

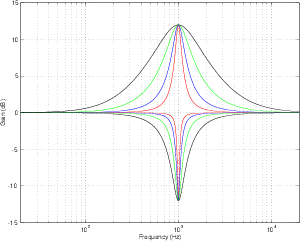

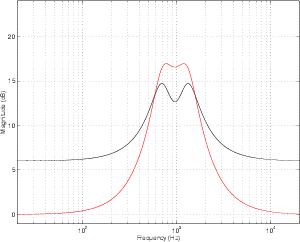

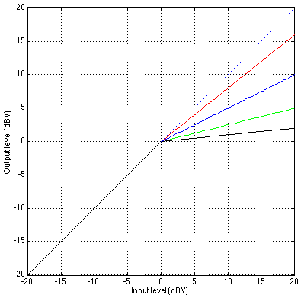

Let’s look at the frequency response of a filter with a centre frequency of 1 kHz, a Q of 4 and a two different amounts of boost or cut. If we plot these responses on the same graph, they look like Figure 6.11.

Notice that, although these two curves have “matching” parameters, they do not have the same shape. This is because the bandwidth (and therefore the Q) of a filter is measured using its 3 dB down point – not the point that’s 3 dB away from the peak or dip in the curve. Since the measurement is not symmetrical, the curves are not symmetrical. This is true of any filter where the Q is kept constant and gain is modified. If you compare a boost of any amount with a cut of the same amount, you’ll always get two different curves. This is what is known as a constant Q filter because the Q is kept as a constant. The result is called an asymmetrical filter (or non-symmetrical filter) because a matching boost and cut are not mirror images of each other.

There are advantages and disadvantages to this type of filter. The primary advantage is that you can have a very selective cut if you’re trying to eliminate a single frequency, simply by increasing the Q. The primary disadvantage is that you cannot undo what you have done. This last statement is explained in the following section.

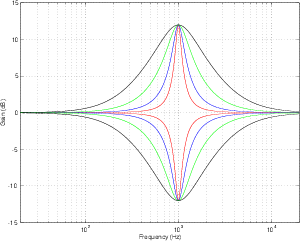

Instead of building a filter where the cut and boost always maintain a constant Q, let’s set about to build a filter that is symmetrical – that is to say that a matching boost and cut at the same centre frequency would result in the same shape. The nice thing about this design is that, if you take two such filters and connect them in series and set their parameters to be the same but opposite gains (for example, both with a centre frequency of 1 kHz and a Q of 2, but one has a boost of 6 dB and the other has a cut of 6 dB) then they’ll cancel each other out and your output will be identical to your input. This also applies if you’ve equalized something while recording – assuming that you live in a perfect world, if you remember your original settings on the recorded EQ curve, you can undo what you’ve done by duplicating the settings and inverting the gain. As a result, we call this a reciprocal peak/dip filterfilter, reciprocal peak/dip.

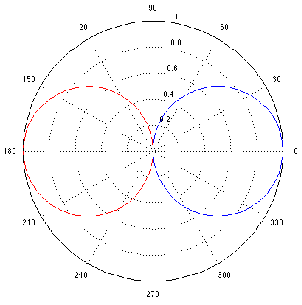

Let’s take two reciprocal peak/dip filters, each set with a Q of 2 and a gain of 6 dB. The only difference between them is that one has a centre frequency of 700 Hz and the other has a centre frequency of 1.3 kHz. If we use both of these filters on the same signal simultaneously, we can achieve two very different resulting frequency responses, depending on how they’re connected.

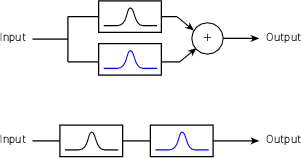

If the two filters are connected in series (it doesn’t matter what order we connect them in), then the frequency band that overlaps in the boosted portion of the two filters’ responses will be boosted twice. In other words, the signal goes through the first filter and is amplified, after which it goes through the second filter and the amplified signal is boosted further. This arrangement is also known as a circuit made of combining filters.

If we connect the two filters in parallel, however, a different situation occurs. Now each filter boosts the original signal independently, and the two resulting signals are added, producing a small increase in level, but not as significant as in the case of the series connection. This arrangement is also known as a circuit made of non-combining filters.

The primary advantage to having filters in connected in series rather than in parallel lies in possibility of increased gain or attenuation. For example, if you have two filters in series, each with a boost of 12 dB and with matched centre frequencies, the total resulting gain applied to the signal is 24 dB (because a gain of 12 dB from the second filter is applied to a signal that already has a gain of 12 dB from the first filter). If the same two filters were connected in parallel, the total maximum gain would be only 18 dB. (This is because a the addition of two identical signals results in a doubling of level which corresponds to an additional gain of only 6 dB. Note as well that the overall gain of a parallel connection is 6 dB.)

The main disadvantage to having filters connected in series rather than in parallel is the fact that you can occasionally result in frequency bands being boosted more than you’re intuitively aware. For example, looking at Figure 6.16, we can see that, based on the centre frequencies of the two filters, we would expect to have two narrow peaks in the total frequency response at 700 Hz and 1.3 kHz. The actual result, as can be seen, is a (sort of...) single broad peak between the two expected centre frequencies. Also, it should be noted that a group of non-combining filters will likely a ripple in their output frequency response.

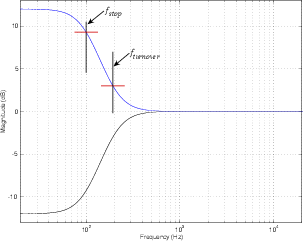

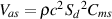

The nice thing about high pass and low pass filters is that you can reduce (or eliminate) things you don’t want (like low-frequency noise from air conditioners, for example.) But, what if you want to boost all your low frequencies instead of cutting all your high’s? This is when a shelving filter comes in handy. The response curve of shelving filters most closely resemble their high- and low-pass filter counterparts with a minor difference. As their name suggests, the curve of these filters level out at a specified frequency called the stop frequency. In addition, there is a second defining frequency called the turnover frequency which is the frequency at which the response is 3 dB above or below 0 dB. This is illustrated in Figure 6.17.

The transition ratio is sort of analogous to the order of the filter and is calculated using the turnover and stop frequencies as shown below.

where RT is the transition ratio.

The closer the transition ratio is to 1, the greater the slope of the transition in gain from the unaffected to the affected frequency ranges. This is because if RT = 1 then fstop = fturnover.

These filters are available as high- and low-frequency shelving units, boosting high and low frequencies respectively. In addition, they typically have a symmetrical response. If the transition ratio is less than 1, then the filter is a low shelving filter. If the transition ratio is greater than 1, then the filter is a high shelving filter.

The disadvantage of these components lies in their potential to boost frequencies above and below the audible audio range causing at the least wasted amplifier power and at the worst, loudspeaker damage. For example, if you use a high shelf filter with a stop frequency of 10 kHz to increase the level of the high end by 12 dB to brighten things up a bit, you will probably also wind up boosting signals above your hearing range. In a typical case, this may cause some unpredictable signals from your tweeter due to increased intermodulation distortion of signals you can’t even hear. To reduce these unwanted effects, super sonic and subsonic signals can be attenuated using a low pass or high pass filter respectively outside the audio band. Using a peaking filter at the appropriate frequency instead of a filter with a shelving response can avoid the problem altogether.

The most common application of this equalizer is the tone controls on home sound systems. These bass and treble controls generally have a maximum slope of 6 dB per octave and reciprocal characteristics. They are also frequently seen on equalizer modules on small mixing consoles.

Graphic equalizers are seen just about everywhere these days, primarily because they’re intuitive to use. In fact, they are probably the most-used piece of signal processing equipment in recording. The name “graphic equalizer” comes from the fact that the device is made up of a number of filters with centre frequencies that are regularly spaced, each with a slider used for gain control. The result is that the arrangement of the sliders gives a graphic representation of the frequency response of the equalizer. The most common frequency resolutions available are one-octave, two-third-octave and one-third-octave, although resolutions as fine as one-twelveth-octave exist. The sliders on most graphic equalizers use ISO standardized band center frequencies (See Section 12.1). They almost always employ reciprocal peak/dip filters wired in parallel. As a result, when two adjacent bands are boosted, there remains a comparatively large dip between the two peaks. This proves to be a great disadvantage when attempting to boost a frequency between two center frequencies. Drastically excessive amounts of boost may be required at the band centers in order to properly adjust the desired frequency. This problem is eliminated in graphic EQ’s using the much-less-common combining filters. In this system, the filter banks are wired in series, thus adjacent bands have a cumulative effect. Consequently, in order to boost a frequency between two center frequencies, the given filters need only be boosted a minimal amount to result in a higher-boosted mid-frequency.

Virtually all graphic equalizers have fixed frequencies and a fixed Q. This makes them simple to use and quick to adjust, however they are generally a compromise. Although quite suitable for general purposes, in situations where a specific frequency or bandwidth adjustment is required, they will prove to be inaccurate.

One attempt to overcome the limitations of the graphic equalizer is the paragraphic equalizer. This is a graphic equalizer with fine frequency adjustment on each slider. This gives the user the ability to sweep the center frequency of each filter somewhat, thus giving greater control over the frequency response of the system.

These equalizers are most commonly found on the input stages of mixing consoles. They are generally used where more control is required over the signal than is available with graphic equalizers, yet space limitations restrict the sheer number of potentiometers available. Typically, the equalizer section on a console input strip will have one or two sweep filters in addition to low and a high shelf filters with fixed turnover frequencies. The frequencies of the mid-range filters are usually reciprocal peak/dip filters with an adjustable (or sweepable) center frequencies and fixed Q’s.

The advantage of this configuration is a relatively versatile equalizer with a minimum of knobs, precisely what is needed on an overcrowded mixer panel. The obvious disadvantage is its lack of adjustment on the bandwidth, a problem that is solved with a parametric equalizer.

A parametric equalizer is one that allow the user to control the gain, centre frequency and Q of each filter. In addition, these three parameters are independent – that is to say that adjusting one of the parameters will have no effect on the other two. They are typically comprised of combining filters and will have either reciprocal peak/dip or constant-Q filters. (Check your manual to see which you have – it makes a huge difference!) In order to give the user a wider amount of control over the signal, the frequency ranges of the filters in a parametric equalizer typically overlap, making it possible to apply gain or attenuation to the same centre frequency using at least two filters.

The obvious advantage of using a parametric equalizer lies in the detail and versatility of control afforded by the user. This comes at a price, however – it unfortunately takes much time and practice to master the use of a parametric equalizer.

A less expensive variation on the true parametric equalizer is the semi-parametric equalizer or quasi-parametric equalizer. From the front panel, this device appears to be identical to its bigger cousin, however, there is a significant difference between the two. Whereas in a true parametric equalizer, the three parameters are independent, in a semi-parametric equalizer, they are not. As a result, changing the value of one parameter will cause at least one, if not both, of the other two parameters to change unexpectedly. As a result, although these devices are less expensive than a true parametric, they are less trustworthy and therefore less functional in real working situations.

| Category | Graphic | Parametric

| |||

| Control | Graphic | Paragraphic | Sweep | Semi-parametric | True Parametric |

| Gain | Y | Y | Y | Y | Y |

| Centre Frequency | N | Y | Y | Y | Y |

| Q | N | N | N | Y | Y |

| Shelving Filter? | N | N | Y | Optional | Optional |

| Combining / Non-combining | N | N | N | Depends | C |

| Reciprocal peak/dip or Constant Q | R p/d | R p/d | R p/d | Typically R p/d | Depends |

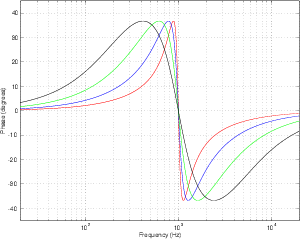

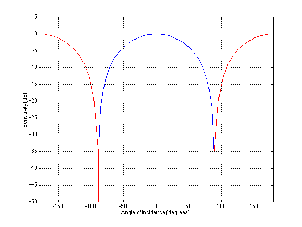

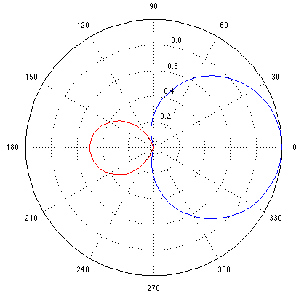

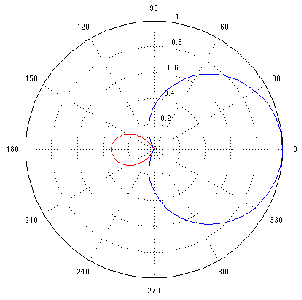

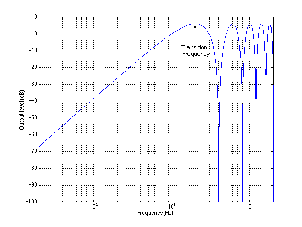

So far, we’ve only been looking at the frequency response of a filter or equalizer. In other words, we’ve been looking at what the magnitude of the output of the filter would be if we send sine tones through it. If the filter has a gain of 6 dB at a certain frequency, then if we feed it a sine tone at that frequency, then the amplitude of the output will be 2 times the amplitude of the input (because a gain of 2 is the same as an increase of 6 dB). What we haven’t looked at so far is any shift in phase (also known as phase distortion) that might be incurred by the filtering process. Any time there is a change in the frequency response in the signal, then there is an associated change in phase response that you may or may not want to worry about. That phase response is typically expressed as a shift (in degrees) for a given frequency. Positive phase shifts mean that the signal is delayed in phase whereas negative phase shifts indicate that the output is ahead of the input.

“The output is ahead of the input!?” I hear you cry. “How can the output be ahead of the input? Unless you’ve got one of those new digital filters that can see into the near future...” Well, it’s actually not as strange as it sounds. The thing to remember here is that we’re talking about a sine wave – so don’t think about using an equalizer to help your drummer get ahead of the beat... It doesn’t mean that the whole signal comes out earlier than it went in. This is because we’re not talking about negative delay – it’s negative phase.

While it’s true that a change in frequency response of a signal necessarily implies that there is a change in its phase, you don’t have to have the same phase shift for the same frequency response change. In fact, different manufacturers can build two filters with centre frequencies of 1 kHz, gains of 12 dB and Q’s of 4. Although the frequency responses of the two filters will be identical, their phase responses can be very different.

You may occasionally hear the term minimum phase to describe a filter. This is a filter that has the frequency response that you want, and incurs the smallest (hence “minimum”) shift in phase to achieve that frequency response.

Two things to remember about minimum phase filters: 1) Just because they have the minimum possible phase shift doesn’t necessarily imply that they sound the best. 2) A minimum phase filter can be “undone” – that is to say that if you put your signal through a minimum phase filter, it is possible to find a second minimum phase filter that will reverse all the effects of the first, giving you exactly the signal you started with.

If you plot the phase response of a filter for all frequencies, chances are you’ll get a smooth, fancy-looking curve like the ones in Figure 6.18. Some filters, on the other hand, have a phase response plot that’s a straight line if you graph the response on a linear frequency scale (instead of a log scale like we normally do...). This line usually slopes upwards so the higher the frequency, the bigger the phase change. In fact, this would be exactly the phase response of a straight delay line – the higher the frequency, the more of a phase shift that’s incurred by a fixed delay time. If the delay time is 0, then the straight line is a horizontal one at 0∘ for all frequencies.

Any filter whose phase response is a straight line is called a linear phase filter. Be careful not to jump to the conclusion that, because it’s a linear phase filter, it’s better than anything else. While there are situations where such a filter is useful, they don’t necessarily work well in all situations to correct all problems. Different intentions require different filter characteristics.

The phase response of a filter is typically strongly related to its Q. The higher the Q (and therefore the smaller the bandwidth) the greater the change in phase around the centre frequency. This can be seen in Figure 6.18 above. Notice that, the higher the Q, the higher the slope of the phase response at the centre frequency of the filter. When the slope of the phase response of a filter gets very steep (in other words, when the Q of the filter is very high) an interesting thing called ringing happens. This is an effect where the filter starts to oscillate at its centre frequency for a length of time after the input signal stops. The higher the Q, the longer the filter will ring, and therefore the more audible the effect will be. In the extreme cases, if the Q of the filter is 0, then there is no ringing (but the bandwidth is infinity and you have a flat frequency response – so it’s not a very useful filter...). If the Q of the filter is infinity, then the filter becomes a sine wave generator.

All this information is great – but why and how would you use an equalizer?

This is probably the most obvious use for an equalizer. You have a lead vocal that sounds too bright so you want to cut back the high frequency content. Or you want to bump up the low mid range of a piano to warm it up a bit. This is the primary intention of the tone controls on the cheapest ghetto blaster through to the best equalizer in the recording studio. It’s virtually impossible to give a list of “tips and tricks” in this category, because every instrument and every microphone in every recording situation will be different. There are time when you’ll want to use an equalizer to compensate for deficiencies in the signal because you couldn’t afford a better mic for that particular gig. On the other hand there may be occasions where you have the most expensive microphone in the world on a particular instrument and it still needs a little tweaking to fix it up. There are, however, a couple of good rules to follow when you’re in this game.

First of all – don’t forget that you can use an equalizer to cut as well as boost. Consider a situation where you have a signal that has too much bass – there are two possible ways to correct the problem. You could increase the mids and highs to balance, or you could turn down the bass. There are as many situations where one of these is the correct answer as there are situations where the other answer is more appropriate. Try both unless you’re in a really big hurry.

Second of all – don’t touch the equalizer before you’ve heard what you’re tweaking. I often notice when I go to a restaurant that there are a huge number of people who put salt and pepper on their meal before they’ve even tasted a single morsel. Doesn’t make much sense... Hand them a plate full of salt and they’ll still shake salt on it before raising a hand to touch their fork. The same goes for equalization. Equalize to fix a problem that you can hear – not because you found a great EQ curve that worked great on kick drum at the last session.

Thirdly – don’t overdo it. Or at least, overdo it to see how it sounds when it’s overdone, then bring it back. Again, back to a restaurant analogy – you know that you’re in a restaurant that knows how to cook steak when there’s a disclaimer on the menu that says something to the effect of “We are not responsible for steaks ordered well done.” Everything in moderation – unless, of course, you’re intending to plow straight through the fields of moderation and into the barn of excess.

Fourthly, there’s a number of general descriptions that indicate problems that can be fixed, or at least tamed with equalization. For example, when someone says that the sound is “muddy,” you could probably clean this up by reducing the area around 125 – 250 Hz with a low-Q filter. Table 6.2 gives a number of basic examples, but there are plenty more – ask around...

| Symptom description | Possible remedy |

| Bright | Reduce high frequency shelf |

| Dark, veiled, covered | Increase high frequency shelf |

| Harsh, crunchy | Reduce 3 – 5 kHz region |

| Muddy, thick | Reduce 125 – 250 Hz region |

| Lacks body or warmth | Increase 250 – 500 Hz |

| Hollow | Reduce 500 Hz region |

One last trick here applies when you hear a resonant frequency sticking out, and you want to get rid of it, but you just don’t know what the exact frequency is. You know that you need to use a filter to reduce a frequency – but finding it is going to be the problem. The trick is to search and destroy by making the problem worse. Set a filter to boost instead of cutting a frequency band with a fairly high Q. Then, sweep the frequency of the filter until the resonance sticks out more than it normally does. You can then fine tune the centre frequency of the filter so that the problem is as bad as you can make it, then turn the boost back to a cut.

Although we rarely like to admit it, we humans aren’t perfect. This is true in many respects, but for the purposes of this discussion, we’ll concentrate specifically on our abilities to hear things. Unfortunately, our ears don’t have the same frequency response at all listening levels. At very high listening levels, we have a relatively flat frequency response, but as the level drops, so does our sensitivity to high and low frequencies. (This effect was discussed in Section 5.4.) As a result, if you mix a tune at a very high listening level and then reduce the level, it will appear to lack low end and high end. Similarly, if you mix at a low level and turn it up, you’ll tend to hear more low end and high end.

One possible use for an equalizer is to compensate for the perceived lack of information in extreme frequency ranges at low listening levels. Essentially, when you turn down the monitor levels, you can use an equalizer to increase the levels of the low and high frequency content to compensate for deficiencies in the human hearing mechanism. This filtering is identical to that which is engaged when you press the “loudness” button on most home stereo systems. Of course, the danger with such equalization is that you don’t know what frequency ranges to alter, and how much to alter them – so it is not recommendable to do such compensation when you’re mixing, only when you’re at home listening to something that’s already been mixed.

It’s possible in some specific cases to use equalization to reduce noise in recordings, but you have to be aware of the damage that you’re inflicting on some other parts of the signal.

High-frequency Noise (Hiss)

Let’s say that you’ve got a recording of an electric bass on a really noisy analog tape deck. Since most of the perceivable noise is going to be high-frequency stuff and since most of the signal that you’re interested in is going to be low-frequency stuff, all you need to do is to roll off the high end to reduce the noise. Of course, this is be best of all possible worlds. It’s more likely that you’re going to be coping with a signal that has some high-frequency content (like your lead vocals, for example...) so if you start rolling off the high end too much, you start losing a lot of brightness and sparkle from your signal, possibly making the end result worse that you started. If you’re using equalization to reduce noise levels, don’t forget to occasionally hit the “bypass” switch of the equalizer once and a while to hear the original. You may find when you refresh your memory that you’ve gone a little too far in your attempts to make things better.

Low-frequency Noise (Rumble)

Almost every console in the world has a little button on every input strip that has a symbol that looks like a little ramp with the slope on the left. This is a high-pass filter that is typically a second-order filter with a cutoff frequency around 100 Hz or so, depending on the manufacturer and the year it was built. The reason that filter is there is to help the recording or sound reinforcement engineer get rid of low-frequency noise like “stage rumble” or microphone handling noise. In actual fact, this filter won’t eliminate all of your problems, but it will certainly reduce them. Remember that most signals don’t go below 100 Hz (this is about an octave and a half below middle C on a piano) so you probably don’t need everything that comes from the microphone in this frequency range – in fact, chances are, unless you’re recording pipe organ, electric bass or space shuttle launches, you won’t need nearly as much as you think below 100 Hz.

Hummmmmmm...

There are many reasons, forgivable and unforgivable, why you may wind up with an unwanted hum in your recording. Perhaps you work with a poorly-installed system. Perhaps your recording took place under a buzzing streetlamp. Whatever the reason, you get a single frequency (and perhaps a number of its harmonics) singing all the way through your recording. The nice thing about this situation is that, most of the time, the hum is at a predictable frequency (depending on where you live, it’s likely a multiple of either 50 Hz or 60 Hz) and that frequency never changes. Therefore, in order to reduce, or even eliminate this hum, you need a very narrow band-reject filter with a lot of attenuation. Just the sort of job for a notch filter. The drawback is that you also attenuate any of the music that happens to be at or very near the notch centre frequency, so you may have to reach a compromise between eliminating the hum and having too detrimental of an effect on your signal.

A dynamic equalizer is one which automatically changes its frequency response according to characteristics of the signal passing through it. You won’t find many single devices what fit this description, but you can create a system that behaves differently for different input signals if you add a compressor to the rack. This is easily accomplished today with digital multi-band compressors which have multiple compressors fed by what could be considered a crossover network similar to that used in loudspeakers.

Dynamic enhancement

Take your signal and, using filters, divide it into two bands with a crossover frequency at around 5 kHz. Compress the higher band using a fast attack and release time, and adjust the output level of the compressor so that when the signal is at a peak level, the output of the compressor summed with the lower frequency band results in a flat frequency response. When the signal level drops, the low frequency band will be reduced more than the high frequency band and a form of high-frequency enhancement will result.

Dynamic Presence

In order to add a sensation of “presence” to the signal, use the technique described in Section 6.1.5 but compress the frequency band in the 2 kHz to 5 kHz range instead of all high frequencies.

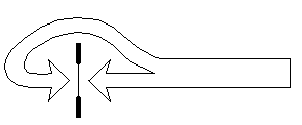

There are many instances where a close-mic technique is used to record a narrator and the result is a signal that emphasizes the sibilant material in the signal – in particular the “s” sound. Since the problem is due to an excess of high frequency, one option to fix the issue could be to simply roll off high frequency content using a low-pass filter or a high-frequency shelf. However, this will have the effect of dulling all other material in the speech, removing not only the “s’s” but all brightness in the signal. The goal, therefore, is to reduce the gain of the signal when the letter “s” is spoken. This can be accomplished using an equalizer and a compressor with a side chain. In this case, the input signal is routed to the inputs of the equalizer and the compressor in parallel. The equalizer is set to boost high frequencies (thus making the “s’s” even louder...) and its output is fed to the side chain input of the compressor. The compression parameters are then set so that the signal is not normally compressed, however, when the “s” is spoken, the higher output level from the equalizer in the side chain triggers compression on the signal. The output of the compressor has therefore been “de-essed” or reduced in sibilance.

Although it seems counterintuitive, don’t forget that, in order to reduce the level of the high frequencies in the output of the compressor, you have to increase the level of the high frequencies at the output of the equalizer in this case.

Pop-reduction

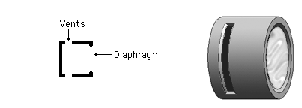

A similar problem to de-essing is the “pop” that occurs when a singer’s plosive sounds (p’s and b’s) cause a thump at the diaphragm of the microphone. There is a resulting overload in the low frequency component of the signal that can be eliminated using the same technique described in Section 6.1.5 where the low frequencies (250 Hz and below) are boosted in the equalizer instead of the high frequency components.

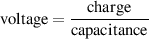

We saw above that the quality factor, or Q, is defined by the centre frequency and the bandwidth of the filter. That bandwidth is defined using the two cutoff frequencies of the filter’s response, which are, in turn, defined using a -3 dB point. So, to find the cutoff frequencies, you find the peak in the filter’s response, and then go 3 dB below that and find the frequencies that intersect that level.

However, this results in some strange effects as we saw above. If you have a peaking filter, then your cutoff frequencies are 3 dB below the peak – the maximum effect in your gain. However, if you have a notch filter, then your -3 dB point is measured down from the part of the response that is unaffected. This is why a constant-Q peak/dip filter is asymmetrical. If you want to make a reciprocal peak/dip filter, you have to change your definition a little so that, if you’re applying a dip, then you measure the bandwidth using the points that at 3 dB up from the bottom of the dip, so we’re not really following the definition of bandwidth (or Q) properly.

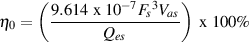

Another problem arises when you have a peak gain that is less than 3 dB. Let’s say that you use a reciprocal peak/dip filter to apply a gain of 2 dB. This means that no point in the response is 3 dB lower than the peak, so it therefore has no definable bandwidth or Q? Hmmmmm.....

There have been some suggested solutions to these problems that have become commonplace in the gear that we use every day. As a result, if you’re really picky about what you’re doing, you should be aware of the variations on Q.

As we saw above, if the Q and bandwidth are always defined by the -3 dB point, then you result in a constant Q behaviour in a peak/dip filter, and therefore an asymmetrical behaviour.

The simplest solution to this problem of asymmetry is to re-define bandwidth when you have a dip – therefore using the 3 dB up points instead.

However, both this method, and the Constant Q method suffer from the problem of what the bandwidth and Q are when the gain is greater than -3 dB and less than 3 dB (since you can’t find a point in a magnitude response that’s 3 dB down when the highest peak is less than 3 dB up...).

One solution that was offered [Moorer, 1983] to get around the problem of gains below 6 dB was to re-define the bandwidth so that, whenever the peak gain is less than 6 dB, you use the gain value that is half of the peak value. For example, if the gain is 6 dB, then you define your bandwidth using the point 3 dB down from that (half of 6 is 3). If the gain is 4 dB, then you define bandwidth using the 2 dB-down (relative to the peak) point.

The nice thing about this definition is that, by using half of the gain to define the bandwidth (and therefore the Q) you automatically get a reciprocal (and therefore symmetrical) shape for peaks and dips.

Finally, along came a guy named Robert Bristow-Johnson who suggested that, since using the half-gain point to define Q was so useful (particularly in making reciprocal filters) then we should use it all the time[Bristow-Johnson, 1994].

There are two catches here. The first is that he suggested that we use this new definition not only for peak/dip filters, but shelving filters as well. The second is that he put a set of equations on Usenet (a precursor to the World Wide Web - where they live today at http://www.musicdsp.org/files/Audio-EQ-Cookbook.txt) that are used to implement the filters. Since those equations were freely available, everyone (well, almost everyone) uses them when they’re building the equalisers that you use in the gear that you buy.

Well... the big question here is whether or not you care that these differences exist. Maybe not. Personally, I do... however, it’s for a very good reason. If I implement an EQ curve on one piece of gear and write down my parameters (type, centre or cutoff frequency, gain and Q) I expect to be able to put those parameters in another piece of gear and get exactly the same response out. This won’t happen if the people who made your equalisers use different definitions of Q. This might be very bad... How bad? Well, let’s take a look...

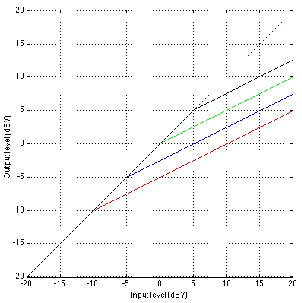

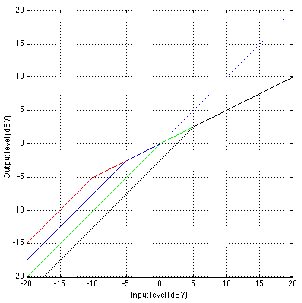

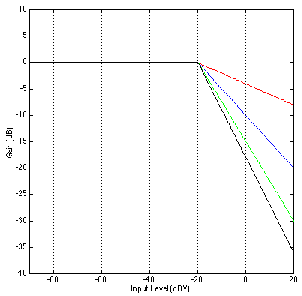

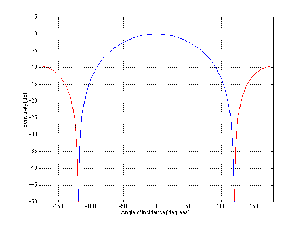

Changes in gain

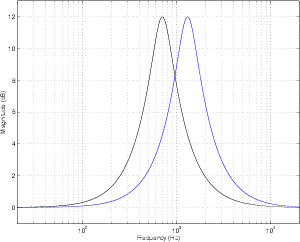

Figures 6.21 and 6.22 shows the difference in gain and phase respectively between two filters, both with an fc of 1 kHz and a Q of 2 and three different gains. However, one filter calculates Q based on the 3 dB down point, the other based on the midpoint of the maximum gain.

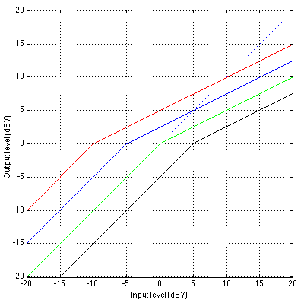

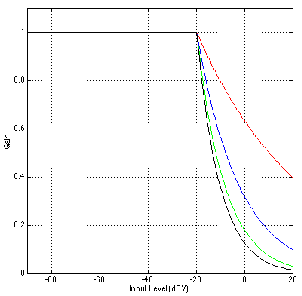

Changes in Q

Figures 6.23 and 6.24 shows the difference in gain and phase respectively between two filters, both with an fc of 1 kHz and a gain of 12 dB and five different Q’s. Again, one filter calculates Q based on the 3 dB down point, the other based on the midpoint of the maximum gain.

What is a filter? – from Julius O. Smith’s substantial website.

So you’re out for a drive in your car, listening to some classical music played by an orchestra on your car’s CD player. The piece starts off very quietly, so you turn up the volume because you really love this part of the piece and you want to hear it over the noise of your engine. Then, as the music goes on, it gets louder and louder because that’s what the composer wanted. The problem is that you’ve got the stereo turned up to hear the quiet sections, so these new loud sections are really loud – so you turn down your stereo. Then, the piece gets quiet again, so you turn up the stereo to compensate.

What you are doing is to manipulate something called the “dynamic range” of the piece. In this case, the dynamic range is the difference in level between the softest and the loudest parts of the piece (assuming that you’re not mucking about with the volume knob). By fiddling with your stereo, you’re making the soft sounds louder and the loud sounds softer, and therefore compressing the dynamics. The music still appears to have quiet sections and loud sections, but they’re not as different as they were without your fiddling.

In essence, this is what a compressor does – at the most basic level, it makes loud sounds softer and soft sounds louder so that the music going through it has a smaller (or compressed) dynamic range. Of course, I’m oversimplifying, but we’ll straighten that out.

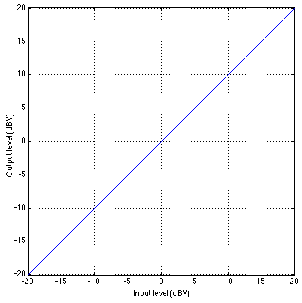

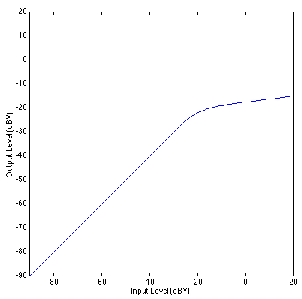

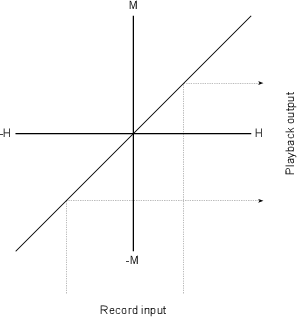

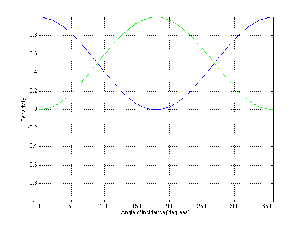

Let’s look at the gain response of an ideal piece of wire. This can be shown as a transfer function as seen in Figure 6.25.

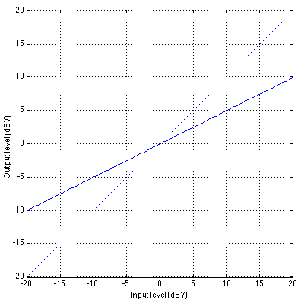

Now, let’s look at the gain response for a simple device that behaves as an oversimplified compressor. Let’s say that, for a sine wave coming in at 0 dBV (1 Vrms, remember?) the device has a gain of 1 (or output=input). Let’s also say that, for every 2 dB increase in level at the input, the gain of this device is reduced by 1 dB – so, if the input level goes up by 2 dB, the output only goes up by 1 dB (because it’s been reduced by 1 dB, right?) Also, if the level at the input goes down by 2 dB, the gain of the device comes up by 1 dB, so a 2 dB drop in level at the input only results in a 1 dB drop in level at the output. This generally makes the soft sounds louder than when they went in, the loud sounds softer than when they went in, and anything at 0 dBV come out at exactly the same level as it goes in.

If we compare the change in level at the input to the change in level at the output, we have a comparison between the original dynamic range and the new one. This comparison is expressed as a ratio of the change in input level in decibels to change in output level in decibels. So, if the output level goes up 1 dB for every 2 dB increase in level at the input, then we have a 2:1 compression ratio. The higher the compression ratio, the more the dynamic range is reduced.

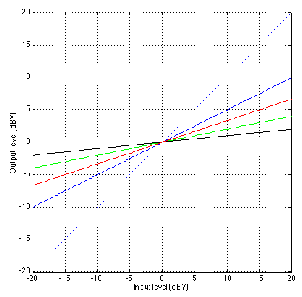

Notice in Figure 6.26 that there is one input level (in this case, 0 dBV) that results in a gain of 1 – that is to say that the output is equal to the input. That input level is known as the rotation point of the compressor. The reason for this name isn’t immediately obvious in Figure 6.26, but if we take a look at a number of different compression ratios plotted on the same graph as in Figure 3, then the reason becomes clear.

Normally, a compressor doesn’t really behave in the way that’s seen in any of the above diagrams. If we go back to thinking about listening to the stereo in the car, we actually leave the volume knob alone most of the time, and only turn it down during the really loud parts. This is the way we want the compressor to behave. We’d like to leave the gain at one level (let’s say, at 1) for most of the program material, but if things get really loud, we’ll start turning down the gain to avoid letting things get out of hand. The gain response of such a device is shown in Figure 6.28.

The level where we change from being a linear gain device (meaning that the gain of the device is the same for all input levels) to being a compressor is called the threshold. Below the threshold, the device applies the same gain to all signal levels. Above the threhold, the device changes its gain according to the input level. This sudden bend in the transfer function at the threshold is called the knee in the response.

In the case of the plot shown in Figure 6.28, the rotation point of the compressor is the same as the threshold. This is not necessarily the case, however. If we look at Figure 6.29, we can see an example of a curve where this is illustrated.

This device applies a gain of 5 dB to all signals below the threshold, so an input level of -20 dBV results in an output of -15 dBV and an input at -10 dBV results in an output of -5 dBV. Notice that the threshold is still at 0 dBV (because it is the input level over which the device changes its behaviour). However, now the rotation point is at 10 dBV.

Let’s look at an example of a compressor with a gain of 1 below threshold, a threshold at 0 dBV and different compression ratios. The various curves for such a device are shown in Figure 6.30. Notice that, below the threshold, there is no difference in any of the curves. Above the threshold, however, the various compression ratios result in very different behaviours.

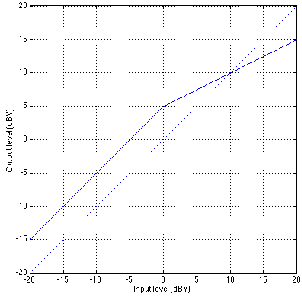

There are two basic “styles” in compressor design when it comes to the threshold. Some manufacturers like to give the user control over the threshold level itself, allowing them to change the level at which the compressor “kicks in.” This type of compressor typically has a unity gain below threshold, although this isn’t always the case. Take a look at Figure 6.31. This shows a number of curves for a device with a compression ratio of 2:1, unity gain below threshold and an adjustable threshold level.

The advantage of this design is that the bulk of the signal, which is typically below the threshold, remains unchanged – by changing the threshold level, we’re simply changing the level at which we start compressing. This makes the device fairly intuitive to use, but not necessarily a good design for the final sound quality.

Let’s think about the response of this device (with a 2:1 compression ratio). If the threshold is turned up to 12 dBV, then any signal coming in that’s less than 12 dBV will go out unchanged. If the input signal has a level of 20 dBV, then the output will be 16 dBV, because the input went 8 dB above threshold and the compression ratio is 2:1, so the output goes up 4 dB.

If the threshold is turned down to -12 dBV, then any signal coming in that’s less than -12 dBV will go out unchanged. If the input signal has a level of 20 dBV, then the output will be 4 dBV, because the input went 32 dB above threshold and the compression ratio is 2:1, so the output goes up 16 dB.

So what? Well, as you can see from Figure 6.31, changing the compression ratio will affect the output level of the loud stuff by an amount that’s determined by the relationship between the threshold and the compression ratio.

Consider for a moment how a compressor will be used in a recording situation: we use the compressor to reduce the dynamic range of the louder parts of the signal. As a result, we can increase the overall level of the output of the compressor before going to tape. This is because the spikes in the signal are less scary and we can therefore get closer to the maximum input level of the recording device. As a result, when we compress, we typically have a tendency to increase the input level of the device that follows the compressor. Don’t forget, however, that the compressor itself is adding noise to the signal, so when we boost the input of the next device in the audio chain, we’re increasing not only the noise of the signal itself, but the noise of the compressor as well. How can we reduce or eliminate this problem? Use compressor design philosophy number 2...

Instead of giving the user control over the threshold, some compressor designers opt to have a fixed threshold and a variable gain before compression. This has a slightly different effect on the signal.

Let’s look at the implications of this configuration using the response in Figure 6.32 which has a fixed threshold of 0 dBV. If we look at the green curve with a gain of 0 dB, then signals coming in are not amplified or attenuated before hitting the threshold detector. Therefore, signals lower than 0 dBV at the input will be unaffected by the device (because they aren’t being compressed and the gain is 0 dB). Signals greater than 0 dBV will be compressed at a 2:1 compression ratio.

Now, let’s look at the blue curve. The low-level signals have a constant 5 dB gain applied to them – therefore a signal coming in a -20 dBV comes out at -15 dBV. An input level of -15 dBV results in an output of -10 dBV. If the input level is -5 dBV, a gain of 5 dB is applied and the result of the signal hitting the threshold detector is 0 dBV – the level of the threshold. Signals above this -5 dBV level (at the input) will be compressed.

If we just consider things in the theoretical world, applying a 5 dB gain before compression (with a threshold fixed at 0 dBV) results in the same signal that we’d get if we didn’t change the gain before compression, reduced the threshold to -5 dBV and then raised the output gain of the compressor by 5 dB. In the practical world, however, we are reducing our noise level by applying the gain before compression, since we aren’t amplifying the noise of the compressor itself.

There’s at least one manufacturer that takes this idea one step further. Let’s say that you have the output of a compressor being sent to the input of a recording device. If the compressor has a variable threshold and you’re looking at the record levels, then the more you turn down the threshold, the lower the signal going into the recording device gets. This can be seen by looking at the graph in Figure 6.31 comparing the output levels of an input signal with a level of 20 dBV. Therefore, the more we turn down the threshold on the compressor, the more we’re going to turn up the input level on the recorder.

Take the same situation but use a compressor with a variable gain before compression. In this case, the more we turn up the gain before compression, the higher the output is going to get. Now, if we turn up the gain before compression, we are going to turn down the input level to the recorder to make sure that things don’t get out of hand.

What would be nice is to have a system where all this gain compensation is done for you. So, using the example of a compressor with gain before compression: we turn up the gain before compression by some amount, but at the same time, the compressor turns down its output to make sure that the compressed part of the signal doesn’t get any louder. In the case where the compression ratio is 2:1, if we turn up the gain before compression by 10 dB, then the output has to be turned down by 5 dB to make this happen. The output attenuation in dB is equal to the gain before compression (in dB) divided by the compression ratio.

What would this response look like? It’s shown in Figure 6.33. As you can see, changes in the gain before compression are compensated so that the output for a signal above the threshold is always the same, so we don’t have to fiddle with the input level of the next device in the chain.

If we were to do the same thing using a compressor with a variable threshold, then we’d have to boost the signal at the output, thus increasing the apparent noise floor of the compressor and making it sound as bad as it is...

As you can see from Figure 6.33, the advantage of this system is that adjustments in the gain before compression (or the threshold) don’t have any affect on how the loud stuff behaves – if you’re past the threshold, you get the same output for the same input.

So far we’ve been looking at the relationship between the output level and the input level of a compressor. Let’s look at this relationship in a different way by considering the gain of the compressor for various signals.

Figure 6.34 shows the level of the output of a compressor with a given threshold and compression ratio. As we would expect, below the threshold, the output is the same as the input, therefore the gain for input signals with a level of less than -20 dBV in this case is 0 dB – unity gain. For signals above this threshold, the higher the level gets the more the compressor reduces the gain – in fact, in this case, for every 8 dB increase in the input level, the output increases by only 1 dB, therefore the compressor reduces its gain by 7 dB for every 8 dB increase. If we look at this gain vs. the input level, we have a response that is shown in Figure 6.35.

Notice that Figure 6.35 plots the gain in decibels vs. the input level in dBV. The result of this comparison is that the gain reduction above the threshold appears to be a linear change with an increase in level. This response could be plotted somewhat differently as is shown in Figure 6.36.

You’ll now notice that there is a rather dramatic change in gain just above the threshold for signals that increase in level just a bit. The result of this is an audible gain change for signals that hover around the threshold – an artifact called pumping This is an issue that we’ll deal with a little later.

Let’s now consider this same issue for a number of different compression ratios. Figures 6.37, 6.38 and 6.39 show the relationships of 4 different compression ratios with the same thresholds and gains before compression to give you an idea of the change in the gain of the compressor for various ratios.

There is a simple solution to the problem of the pumping caused by the sudden change in gain when the signal level crosses the threshold. Since the problem is caused by the fact that the gain change is sudden because the knee in the response curve is a sharp corner, the solution is to soften the sharp corner into a gradual bend. This response is called a soft knee for obvious reasons as can be seen in Figure 6.40.

So far, we’ve been looking at a device that alters its gain according to the input level, but we’ve been talking in terms of the input level being measured in dBV – therefore, we’re thinking of the signal level in VRMS. In fact, there are two types of level detection available – compressors can either respond to the RMS value of the input signal, or the peak value of the input signal. In fact, some compressors give you the option of selecting some combination of the two instead of just selecting one or the other.

Probably the simplest signal detection method is the RMS option. As we’ll see later, the signal that is input to the device goes to two circuits: one is the circuit that changes the gain of the signal and sends it out the output of the device. The second, known as the control path determines the RMS level of the signal and outputs a control signal that changes the gain of the first circuit. In this case, the speed at which the control circuit can respond to changes in level depends on the time constant of the RMS detector built into it. For more info on time constants of RMS measurements, see Section 2.1.6. The thing to remember is that an RMS measurement is an average of the signal over a given period of time, therefore the detection system needs a little time to respond to the change in the signal. Also, remember that if the time constant of the RMS detection is long, then a short, high level transient will get through the system without it even knowing that it happened.

If you’d like your compressor to respond a little more quickly to the changes in signal level, you can typically choose to have it determine its gain based on the peak level of the signal rather than the RMS value. In reality, the compressor is not continuously looking at the instantaneous level of the voltage at the input – it’s usually got a circuit built in that looks at a smoothed version of the absolute value of the signal. Almost all compressors these days give you the option to switch between a peak and an RMS detection circuit.

On high-end units, you can have your detection circuit respond to some mix of the simultaneous peak and RMS values of the input level. Remember from Chapter 2.1.6 that the ratio of the peak to the RMS is called the crest factor. This ratio of peak/RMS can either be written as a value from 0 to something big, or it may be converted into a dB scale. Remember that, if the crest factor is near 0 (or -∞ dB), then the RMS value is much greater than the peak value and therefore the compressor is responding to the RMS of the signal level. If the crest factor is a big number, then the compressor is responding to the peak value of the input level.

Now that we’re talking about the RMS and the smoothed peak of the signal, we have to start considering what time it is. Up to now, we’ve been only looking at the output level or the gain of the compressor based on a static input level. We have been assuming that the only thing we’re sending through the unit is a steady-state sine tone. Of course, this is pretty boring to listen to, but if we’re going to look at real-world signals, then the behaviour of the compressor gets pretty complicated.

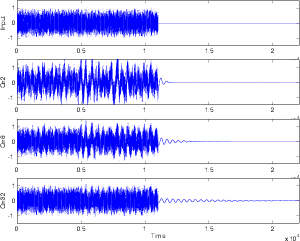

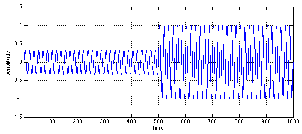

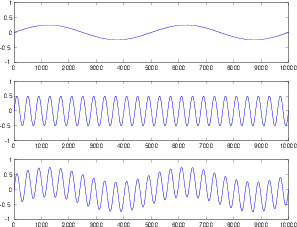

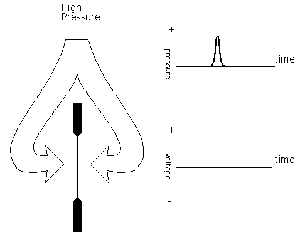

Let’s start by considering a signal that’s quiet to begin with and suddenly gets louder. For the purposes of this discussion, we’ll simulate this with a pulse-modulated sine wave like the one shown in Figure 6.41.

Unfortunately, a real-world compressor cannot respond instantaneously to this sudden change in level. In order to be able to do this, the unit would have to be able to see into the future to know what the new peak value of the signal will be before we actually hit that peak. (In fact, some digital compressors can do this by delaying the signal and turning the present into the past and the future into the present, but we’ll pretend that this isn’t happening for now...).

Let’s say that we have a compressor with a gain before compression of 0 dB and a threshold that’s set to a level that’s higher than the lower-level signal in Figure 6.41, but lower than the higher-level signal. So, the first part of the signal, the quiet part, won’t be compressed and the later, louder part will. Therefore the compressor will have to have a gain of 1 (or 0 dB) for the quiet signal and then a reduced gain for the louder signal.

Since the compressor can’t see into the future, it will respond somewhat slowly to the sudden change in level. In fact, most compressors allow you to control the speed with which the gain change happens. This is called the attack time of the compressor. Looking at Figure 6.42, we can see that the compressor has a sudden awareness of the new level (at Time = 500) but it then settles gradually to the new gain for the higher signal level. This raises a question – the gain starts changing at a known time, but, as you can see in Figure 6.42, it approaches the final gain forever without really reaching it. The question that’s raised is “what is the time of the attack time?” In other words, if I say that the compressor has an attack time of 200 ms, then what is the relationship between that amount of time and the gain applied by the compressor. The answer to this question is found in the chapter on capacitors. Remember that, in a simple RC circuit, the capacitor charges to a new voltage level at a rate determined by the time constant which is the product of the resistance and the capacitance. After 1 time constant, the capacitor has charged to 63 % of the voltage being applied to the circuit. After 5 time constants, the capacitor has charged to over 99 % of the voltage, and we consider it to have reached its destination. The same numbers apply to compressors. In the case of an attack time of 200 ms, then after 200 ms has passed, the gain of the compressor will be at 63 % of the final gain level. After 5 times the attack time (in this case, 1 second) we can consider the device to have reached its final gain level. (In fact, it never reaches it, it just gets closer and closer and closer forever...)

What is the result of the attack time on the output of the compressor? This actually is pretty interesting. Take a look at Figure 6.43 showing the output of a compressor that has the signal in Figure 6.41 sent into it and responding with the gain in Figure 6.42. Notice that the lower-level signal goes out exactly as it went it. We would expect this because the gain of the compressor for that portion of the signal is 1. Then the signal suddenly increases to a new level. Since the compressor detection circuit take a little while to figure out that the signal has gotten louder, the initial new loud signal gets through, almost unchanged. As we get further and further into the new level in time, however, the gain settles to the new value and the signal is compressed as we would expect. The interesting thing to note here is that a portion of the high-level signal gets through the compressor. The result is that we’ve created a signal that sounds like more of a transient than the input. This is somewhat contrary to the way most people tend to think that a compressor behaves. The common belief is that a compressor will control all of your high-level signals, thus reducing your dynamic range – but this is not exactly the case as we can see in this example. In fact, it may be possible that the perceived dynamic range is greater than the original because of the accents on the transient material in the signal.

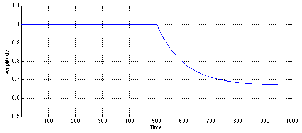

Similarly, what happens when the signals decreases in level from one that is being compressed to one that is lower than the threshold? Again, it takes some time for the compressor’s detection circuit to realize that the level has changed and therefore responds slowly to fast changes. This response time is called the release time of the compressor. (Note that the release time is measured in the same way as the attack time – it’s the amount of time it takes the compressor to get to 63% of its intended gain.)

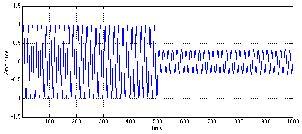

For example, we’ll assume that the signal in Figure 6.44 is being fed into a compressor. We’ll also assume that the higher-level signal is above the compression threshold and the lower-level signal is lower than the threshold.

This signal will result in a gain reduction for the first part of the signal and no gain reduction for the latter part, however, the release time of the compressor results in a transition time from these two states as is shown in Figure 6.45.

Again, the result of this gain response curve is somewhat interesting. The output of the compressor will start with a gain-reduced version of the louder signal. When the signal drops to the lower level, however, the compressor is still reducing the gain for a while, therefore we wind up with a compressed signal that’s below the threshold – a signal that normally wouldn’t be compressed. As the compressor figures out that the signal has dropped, it releases its gain to return to a unity gain, resulting in an output signal shown in Figure 6.46.

Just for comparison purposes, Figures 6.47 and 6.48 show a number of different attack and release times.

One last thing to discuss is a small issue in low-cost RMS-based compressors. In these machines, the attack and release times of the compressor are determined by the time constant of the RMS detection circuit. Therefore, the attack and release times are identical (normally, we call them “symmetrical”) and not adjustable. Check your manual to see if, by going into RMS mode, you’re defeating the attack time and release time controls.

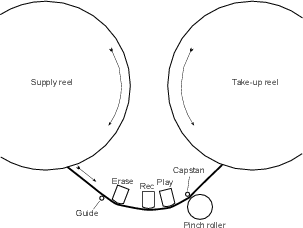

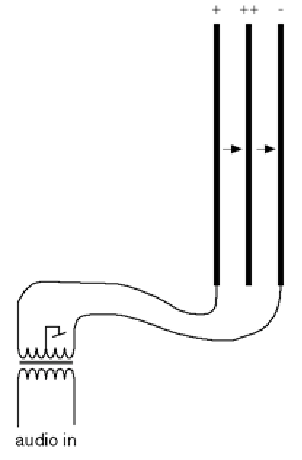

On its simplest level, a compressor can be thought of as a device which controls its gain based on the incoming signal. In order to do this, it takes the incoming audio and sends it in two directions, along the audio path, which is where the signal goes in, gets modified and comes out the output; and the control path, (also known as a side chain) where the signal comes in, gets analysed and converted into a different signal which is used to control the gain of the audio path.

As a result, we can think of a basic compressor as is shown in the block diagram in Figure 6.49.

If we’re going to start asking difficult questions about compressors, a good place to start is to look at the the behaviour of the level detection component of the side chain. Exactly what kind of level is the level detection measuring (or at least, claiming to measure...), how accurate is that measurement, and how well does it behave?

There are a number of possible answers to the first part of the question. Depending on the compressor, you might have one with an RMS detector, a peak detector (probably with an RC-based attack and release control) or a pseudo-RMS detector. Let’s look at how each of these behaves, how they behave differently, and how neither of them will give you what you expect or want...

Let’s start by looking at a very simple signal. We will make a stepped signal that goes from 0 V 1 to 0.5 V to 1 V, back down to 0.5 V and returning to (almost) 0 V. This input signal is shown as the green line in Figures 6.50 to 6.57.

RMS Detectors

Figures 6.50 and 6.51 show the response of a running RMS measurement of the signal. There are at least four things to notice here. Firstly, when the input voltage changes, the RMS measurement is always a little late getting to the new value. (To be precise, the amount of time it takes to get to the new voltage is equal to the RMS time window.) Secondly, the shapes of the attack and the decay of the RMS detector output are symmetrical. Thirdly, there are two discontinuities in the slope of the RMS detector output signal on each change of input voltage. (In other words, there are two “corners” in the black line for every jump in the green line.) Fourthly, because this is a DC signal (between changes, at least...) the RMS output is equal to the input after the detector has settled. This would not be true if the input voltage was changing faster than one RMS time constant apart. If that were the case, then the RMS detector would not have time to get to the new input voltage before it had to go somewhere else. Later, we’ll see that this is a problem with more musical signals like sine waves.

Pseudo RMS Detectors

Some manufacturers don’t like implementing true RMS detection in their compressors because it’s expensive. If you’re working with analogue gear, it means you have to put in more parts. If you’re building a digital compressor, then you need more memory. Either way, if you can get a nearly-RMS behaviour without the added expense, you might try to get away with this. One easy way to do this is to keep the R and the S and throw away the M in the RMS. In other words, you Square the input signal, then low-pass filter the result with a first-order RC filter (instead of finding the Mean value), and get the square Root of the result. If you’re reasonably careful about your RC time constant (in other words, the cutoff frequency of your low-pass filter), then you can get something that behaves something like an RMS detector without the expense.

However, what you get will not be exactly like an RMS detector as we can see in Figures 6.52 and 6.53.

Note that there is a small difference in the ramp-up behaviour as compared to a true-RMS detector. Specifically, there is only one discontinuity in the signal’s slope (only one corner in the black line). Other than that, it appears to be almost the same shape. However, the ramp-down behaviour is very different. If you look at the shape of the curve in Figure 6.52 compared to Figure 6.50, you can see that they have sort of an opposite behaviour in time. Where the RMS detector has a an increasingly vertical slope as it goes through its decay, the pseudo-RMS detector starts with a nearly-vertical slope and becomes more and more horizontal at it reaches its target value. This difference is even more evident when you take a look at the same curves displayed on a decibel scale. (see Figures 6.51 and 6.53.) The RMS detector’s output has an increasingly vertical decay when measured in decibels (therefore the rate of change in dB increases over time) whereas the pseudo-RMS detector’s output has a constant slope in dB over time. (This means that the decay of the pseudo-RMS detector’s output is more like the decay of a musical instrument or a reverb tail.)

Peak Detector with Symmetrical RC-based Attack and Release

In other cases, a compressor will be based on a peak detection circuit which is typically followed by a first-order RC circuit to control the attack and release times. In this case, the absolute value of the incoming signal is low-pass filtered by the first-order low-pass filter. Usually there are two different filters - one for attack and the other for the release. However, we’ll look at a system with a single low-pass first. Figures 6.54 and 6.55 show the output of a peak detector with a single RC filter used to smooth its output.

Note that the behaviour of this circuit is very similar to that of the pseudo-RMS detection circuit. In fact, if you don’t look carefully, you’ll think that the two curves are identical. However, they’re not – looking carefully, you’ll see that the response of the peak detector with the symmetrical RC low-pass is faster than that of the pseudo-RMS detector. This is particularly noticeable in the decibel scale representations of the responses in Figures 6.55 and 6.53. This is due to the fact that, in the case of the pseudo-RMS detector, the RC circuit is applied to the square of the signal (analogous to the signal’s power) whereas, in the case of the peak detector, the RC circuit is applied to the absolute value of the signal. This means, that, in the case of the pseudo-RMS detector, we’re seeing the square root of the response of the RC filter, whereas in the case of the peak detector, we see the unaltered response of the RC filter.

Peak Detector with Asymmetrical Attack and Release

Of course, it is more normal with a peak compressor to have separate attack and release time controls. While this will typically be implemented in almost exactly the same way as the previously-described circuit with a symmetrical attack and release, the usual setting of fast-attack-with-a-slow-release may result in an effect of this circuit to be seen more often. Figures 6.56 and 6.57 show a peak detector with separate RC smoothing filters for the attack and the release. The attack filter (when the input signal is increasing) is set to have a time constant of 0.2 ms, identical to the symmetrical filter shown above. The release filter (when the input signal is decreasing) is set to have a time constant of 100 times the attack. Note that these values should not be taken as suggestions – they are merely arbitrary values chosen to illustrate the behaviour of the detection circuit.

As can be seen in Figures 6.56 and 6.57, the attack of the detection circuit is identical to the symmetrical behaviour shown in Figures 6.54 and 6.55. This is because the two circuits are, in fact identical. However, as is immediately obvious in both Figure 6.56 and Figure 6.57, the release takes much longer to decay. This should not come as a surprise. However, the interesting thing to note is the change in slope at 35 ms. This is caused by the fact that the target value (the input voltage) changes at 35 ms, before the decay from the change at 25 ms has settled.

Sine waves

Let’s now move away from a simple input signal (the stepped DC used above) to something a little more complicated like a sine wave. This makes the detectors a little more busy, since they’re always trying to catch up to an ever-changing input level. Now the behaviour of the detectors’ outputs will be highly dependent on the relationship between the input signal’s period and the time constant of the detectors’ smoothing filters.

When the period of the input signal is much longer than the detector’s time constant, the level detector will have an output that is very similar to the absolute value of the input signal. This can be seen in the example in Figure 6.58. As can be seen in this example, nearly all of the detectors’ outputs are equivalent, the exception being the Peak Detector with the asymmetrical attack and release, since its release time constant is 20 ms – two times the period of the incoming signal. In the other three cases, it can be seen that the output of the detectors swings back and forth from nearly 0 V up to a value very close to the peak value of the input signal. We can think of this deviation as a ripple in the outputs of the detectors. So, we can say that, when the period of the input signal is much greater than the time constant of the detector’s smoothing filter, the peak-to-peak ripple on the output of the detector is close to the peak amplitude of the input signal.

In addition, you can see that the outputs of all level detectors is slightly behind the input signal – some more than others.