Everything, everywhere is made of molecules, which in turn are made of atoms (except in the case of the elements, in which the molecules are atoms). Atoms can be thought of as being made of two things: a nucleus, and electrons that orbit it. The nucleus is made of protons and neutrons. Each of these three particles (the electrons, neutrons and protons) has a specific charge. (Apparently, charge is a difficult thing to define. One way to think of it is that charge is what makes a sub-atomic particle (for example, an electron) attracted to (or repelled by) another sub-atomic particle. Since atoms are made of sub-atomic particles, and everything is made of atoms, some materials (like balloons) are able to hold a charge and be attracted to other materials (like ceilings).) Electrons have a negative charge, protons have a positive charge, and neutrons have no charge. As a result, the electrons, which orbit around the nucleus like planets around the sun, don’t go flying off into the next atom, because their negative charge is attracted to the positive charge of the protons. Just as gravity keeps the planets orbiting the sun instead of flying off into space, charge keeps the electrons orbiting the nucleus.

There is a slight difference between the orbits of the planets and the orbits of the electrons. In the solar system, every planet has its own orbit, each a different distance from the sun, forming roughly concentric ellipses from Mercury out to Pluto (or sometimes Neptune). In an atom, the electrons group together into what are called shells. The outermost one is called the valence shell. A shell is much like an orbit that is shared by a number of electrons. Different shells hold different numbers of electrons, and together these add up to the total number in the atom. Different atoms have a different number of electrons, depending on the element. This number can be looked up in a periodic table of the elements, a simple version of which is shown in Figure 2.1. For example, the element hydrogen, which is number 1 on the periodic table, has 1 electron in each of its atoms; copper, on the other hand, is number 29 and therefore has 29 electrons.

Each shell likes to have a specific number of electrons in it to be stable. The innermost shell is “full” when it has 2 electrons. The number of electrons required in each shell outside that one is a little more complicated, but it is well explained in any high-school chemistry textbook.

Let’s look at a diagram of two atoms. As can be seen in Figure 2.2, the helium atom has a single shell, and it is full. The copper atom, on the other hand, has just one lonely electron in its outermost shell. This difference between the two atomic structures gives the two substances very different characteristics.

In the case of the helium atom, since its shell is full, the atom is very stable. The nucleus holds on to its electrons very tightly and will not let go without a great deal of persuasion, nor will it accept any new stray electrons. The copper atom, in comparison, has a weakly held electron that can be nudged out of place. The questions are, how does one “nudge” an electron, and where does it go when released? The answers are rather simple: we push the electron out of the atom with another electron from an adjacent atom. The new electron takes its place and the now-free particle moves to the next atom to push out its electron.

So essentially, if we have a wire made of a long string of copper atoms, and we add some electrons to one end of it, and give the electrons on the other end somewhere to go, then we can have a flow of particles through the metal.

I used to have a slight plumbing problem in my kitchen. I had two sinks, side by side, one for washing dishes and one for putting the washed dishes in to dry. The drains of the two sinks fed into a single drain, which had built up some rust inside over the years (I lived in an old building).

When I filled up one sink with water and pulled the plug, the water went down the drain but couldn’t get through the bottom drain as quickly as it should. As a result, my second sink filled up with water coming up through its drain from the other sink.

Why does this happen? Well – the first answer is “gravity” – but there’s more to it than that. Think of the two sinks as two tanks joined by a pipe at their bottoms. We’ll put different amounts of water in each sink.

The water in the tank on the left weighs a lot. You can prove this by trying to lift the tank. So, the water is pushing down on the tank, but we also have to consider that the water at the top of the tank is pushing down on the water at the bottom. Thus there is more water pressure at the bottom than at the top. Think of it as the molecules being squeezed together at the bottom of the tank, a result of the weight of all the water above them. Since there’s more water in the tank on the left, there is more pressure at the bottom of the left tank than there is in the right tank.

Now consider the pipe. On the left end, we have the water pressure trying to push the water through the pipe. On the right end, we also have pressure pushing against the water, but less of it than on the left. The result is that the water flows through the pipe from left to right. This continues until the pressure at both ends of the pipe is the same or, in other words, until we have the same water level in each tank.

We also have to think about how much water flows through the pipe in a given amount of time. If the difference in water pressure between the two ends is quite high, then the water will flow quite quickly through the pipe. If the difference in pressure is small, then only a small amount of water will flow. Thus the flow of the water (the volume which passes a point in the pipe per amount of time) is proportional to the pressure difference. If the pressure difference goes up, then the flow goes up.

The same can be said of electricity, or the flow of electrons through a wire. If we connect two “tanks” of electrons, one at either end of the wire, and one “tank” has more electrons (or more pressure) than the other, then the electrons will flow through the wire, bumping from atom to atom, until the two tanks reach the same level. Normally we call the two tanks a battery. Batteries have two terminals. One is the opening to a tank full of too many electrons (the negative terminal, because electrons are negative). The other is the opening to a tank with too few electrons (the positive terminal). If we connect a wire between the two terminals (don’t try this at home!), then the surplus electrons at the negative terminal will flow through to the positive terminal until the two terminals have the same number of electrons in them. The number of surplus electrons in the tank determines the “pressure” or voltage (abbreviated V and measured in volts) being put on the terminal. (Note: once upon a time, people used to call this electromotive force or EMF but as knowledge increases from generation to generation, so does laziness, apparently... So, most people today call it voltage instead of EMF. Never one to go against the mob, I’ll do the same.) The more electrons, the more voltage, or electrical pressure. The flow of electrons in the wire is called current (abbreviated I and measured in amperes or amps). One amp is actually a specific number of electrons passing a point in the wire every second: 6.24150948 × 10¹⁸, or 6,241,509,480,000,000,000, to be precise – possibly even accurate... (Note: some people call this “amperage” – but it’s not common enough to be a standard... yet...) If we increase the voltage (pressure) difference between the two ends of the wire, then the current (flow) will increase, just as the flow of water in our pipe between the two tanks did.
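To make the electron count above a little more concrete, here is a minimal Python sketch that converts a current into electrons per second. It uses the figure quoted in the text. The function name `electrons_per_second` is only an illustrative choice.

```python
# Electrons passing a point each second in a current of 1 A, as quoted in the text.
ELECTRONS_PER_AMP_SECOND = 6.24150948e18


def electrons_per_second(current_amps: float) -> float:
    """Return how many electrons pass a point in the wire each second."""
    return current_amps * ELECTRONS_PER_AMP_SECOND


# A hypothetical current of half an amp.
print(f"{electrons_per_second(0.5):.4e}")  # 3.1208e+18
```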

There’s one important point to remember when you’re talking about current. Due to a bad guess on the part of Benjamin Franklin, current flows in the opposite direction to the electrons in the wire, so while the electrons are flowing from the negative to the positive terminal, the current is flowing from positive to negative. This system is called conventional current theory. There are some books out there that follow the flow of electrons – and therefore say that current flows from negative to positive. It really doesn’t matter which system you’re using, so long as you know which is which.

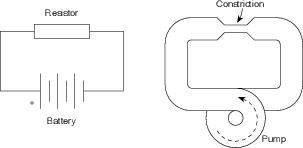

Let’s now replace the two tanks with a pump, with a pipe connecting its output to its input. That way, we won’t run out of water. When the pump is turned on, it decreases the water pressure at its input in order to increase the pressure at its output. The water in the pipe doesn’t enjoy having different pressures at different points in the same pipe, so it tries to equalize them by moving some water molecules out of the high-pressure area and into the low-pressure area. This creates water flow through the pipe, and the process continues until the pump is switched off.

Let’s complicate matters a little by putting a constriction in the pipe – a small section where the diameter of the tube is narrower than anywhere else in the system. If we keep the same pressure difference created by the pump, then less water will go through because of the restriction – therefore, in order to get the same amount of water through the pipe as before, we’ll have to increase the pressure difference. So the higher the pressure difference, the higher the flow; the greater the restriction, the smaller the flow.

We’ll also have a situation where the pressure at the input to the restriction is different from that at the output. This is because the water molecules are bunching up at the point where they are trying to get through the smaller pipe. In fact, the pressure at the output of the pump will be the same as that at the input of the restriction, while the pressure at the input of the pump will match that at the output of the restriction. We could also say that there is a drop in pressure across the smaller-diameter pipe.

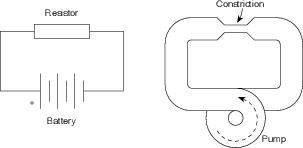

We can have almost exactly the same scenario with electricity instead of water. The electrical equivalent to the restriction is called a resistor. It’s a small component which resists the current, or flow of electrons. If we place a resistor in the wire, like the restriction in the pipe, we’ll reduce the current as is shown in Figure 2.3.

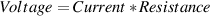

The higher the voltage difference, the higher the current. The bigger the resistance, the smaller the current. Just as in the case of the water, there is a drop in voltage (electrical “pressure”) across the resistor: the voltage at the output of the resistor is lower than that at its input. This relationship is normally expressed as an equation called Ohm’s Law, which goes like this:

$$V = IR \tag{2.1}$$

or

$$R = \frac{V}{I} \tag{2.2}$$

where V is in volts (abbreviated V), I is in amps (abbreviated A) and R is in ohms (abbreviated Ω).

We use this equation to define how much resistance we have. The rule is that 1 V of potential difference across a resistor will make 1 A of current flow through it if the resistor has a value of 1 Ω. An ohm is simply a measurement of how much the flow of electrons is resisted.

The equation is also used to calculate one quantity in an electrical circuit given the other two. For example, if you know the current through a resistor and its resistance, you can calculate the voltage drop across it.
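Ohm’s law can be rearranged three ways, so a small Python sketch can solve for whichever quantity is missing. The function names are hypothetical helpers and do not come from any library.

```python
def voltage(current_a: float, resistance_ohm: float) -> float:
    """V = IR: the voltage drop across a resistor."""
    return current_a * resistance_ohm


def current(voltage_v: float, resistance_ohm: float) -> float:
    """I = V/R: the current through a resistor."""
    return voltage_v / resistance_ohm


def resistance(voltage_v: float, current_a: float) -> float:
    """R = V/I: the resistance that gives this current at this voltage."""
    return voltage_v / current_a


print(voltage(0.5, 100))   # 50.0  (volts across 100 ohms carrying 0.5 A)
print(current(9, 1000))    # 0.009 (amps through 1000 ohms with 9 V across it)
print(resistance(1, 1))    # 1.0   (1 V driving 1 A: the definition of the ohm)
```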

Everything resists the flow of electrons to different degrees. Copper doesn’t resist very much at all. In other words, it conducts electricity well, so it is used for wiring. Rubber, on the other hand, has a very high resistance. In fact, its resistance is almost infinite, so we call it an insulator.

If we return to the example of a pump creating flow through a pipe, it’s pretty obvious that this little system is useless for anything other than wasting the energy required to run the pump. If we were smart we’d put some kind of turbine or waterwheel in the pipe which would be connected to something which does work – any kind of work. Once upon a time a similar system was used to cut logs – connect the waterwheel to a circular saw; nowadays we do things like connecting generators to the turbine to generate electricity to power circular saws. In either case, we’re using the energy or power in the moving water to do something useful.

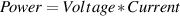

How can we measure or calculate how much work our waterwheel is capable of doing? Well, there are two variables that concern us: the pressure behind the water, and the quantity of water flowing through the pipe and turbine. If there is more pressure, there is more energy in the water to do stuff; if there is more flow, then there is more water to do stuff.

Electricity can be used in the same fashion. We can put a small device in the wire between the two battery terminals, and it will convert the power in the electrical current into some useful work, like brewing coffee or powering an electric stapler. We have the same two quantities to concern us, except that now they are named voltage and current. The higher the voltage or the higher the current, the more power the system can deliver.

This relationship can be expressed by an equation called Watt’s Law which is as follows:

$$P = VI \tag{2.3}$$

or

$$I = \frac{P}{V} \tag{2.4}$$

where P is in watts, V is in volts and I is in amps.

Just as Ohm’s law defines the ohm, Watt’s law defines the watt. One watt is the amount of power consumed by a device that uses 1 amp of current when it is supplied with 1 volt of difference across its terminals.

We can create a variation on Watt’s law by combining it with Ohm’s law as follows:

$$P = VI \tag{2.5}$$

and

$$V = IR \tag{2.6}$$

therefore

$$P = I(IR) \tag{2.7}$$

$$P = I^2 R \tag{2.8}$$

and

$$P = V\left(\frac{V}{R}\right) \tag{2.9}$$

$$P = \frac{V^2}{R} \tag{2.10}$$

Note that, as shown in the last equation, the power is proportional to the square of the voltage. This gem of wisdom will come in handy later.
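The three forms of the power equation should always agree. Here is a minimal Python sketch that checks this for a hypothetical 10 V, 5 Ω circuit. It also shows the voltage-squared relationship: doubling the voltage across the same resistor quadruples the power. The function names are illustrative only.

```python
def power_vi(v: float, i: float) -> float:
    """P = VI"""
    return v * i


def power_i2r(i: float, r: float) -> float:
    """P = I^2 R"""
    return i ** 2 * r


def power_v2r(v: float, r: float) -> float:
    """P = V^2 / R"""
    return v ** 2 / r


# Hypothetical circuit: 10 V across a 5 ohm resistor, so I = V/R = 2 A.
v, r = 10.0, 5.0
i = v / r
print(power_vi(v, i), power_i2r(i, r), power_v2r(v, r))  # 20.0 20.0 20.0

# Doubling the voltage across the same resistor quadruples the power.
print(power_v2r(2 * v, r) / power_v2r(v, r))  # 4.0
```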

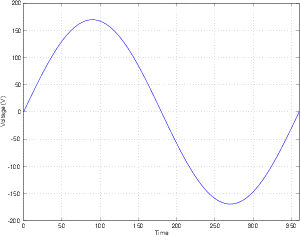

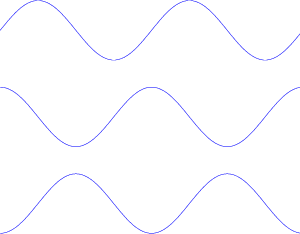

So far we have been talking about a constant supply of voltage, one that doesn’t change over time, such as a battery before it starts to run down. This is commonly known as direct current or DC, which means that the voltage does not change over a period of time. This is not the kind of electricity found coming out of the sockets in your wall at home. The electricity supplied by your electricity company changes over short periods of time (it changes over long periods of time as well, but that’s an entirely different story...). The voltage difference between the two terminals in your wall socket swings between about -170 V and 170 V and back again sixty times a second (if you live in North America, at least...). This brings up two important points to discuss.

Firstly, the negative voltage... All a negative voltage means is that the difference is in the opposite direction to the one we’re measuring. There are more electrons at the tested point in the circuit than there are at the reference point, and therefore more negative charge. Think of this in terms of the two tanks of water. If you’re sitting at the bottom of the empty tank and you measure the pressure of the full one, its pressure will be higher, and therefore positive relative to your reference. If you’re at the bottom of the full tank and you measure the pressure at the bottom of the empty one, you’ll find that it’s lower than your reference, and therefore negative. (It’s like describing someone by their height. It doesn’t matter how tall or short someone is – if you say they’re tall, it probably means that they’re taller than you.)

Secondly, the idea that the voltage is fluctuating. When you plug your coffee maker into the wall, you’ll notice that the plug has two terminals. One is a reference voltage which stays constant (normally called the “cold” wire in this case...). The other is the “hot” wire, whose voltage changes relative to the cold wire. The device in the coffee maker which is doing the work is connected to each of these two wires. When the voltage in the hot wire is positive in comparison to the cold wire, the current flows from hot through the coffee maker to cold. One one-hundred-and-twentieth of a second later, the hot wire is negative compared to the cold wire, so the current flows from cold to hot. This is commonly known as alternating current or AC.

So remember, alternating current means that both the voltage and the current are changing in time.
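Here is a minimal Python sketch of the wall voltage. It assumes the wall voltage is an ideal 60 Hz sine wave with a 170 V peak, which real mains voltage never quite is. The sketch shows the hot wire going from its positive peak to its negative peak half a cycle (1/120 s) later, and the direction of conventional current at each moment. The function name `hot_voltage` is hypothetical.

```python
import math

PEAK_V = 170.0   # volts, approximate North American mains peak
FREQ_HZ = 60.0   # cycles per second


def hot_voltage(t_seconds: float) -> float:
    """Voltage of the hot wire relative to the cold wire at time t (ideal sine)."""
    return PEAK_V * math.sin(2 * math.pi * FREQ_HZ * t_seconds)


t1 = 1 / 240        # a quarter of a cycle in: the positive peak
t2 = t1 + 1 / 120   # half a cycle later: the negative peak
for t in (t1, t2):
    v = hot_voltage(t)
    direction = "hot -> cold" if v > 0 else "cold -> hot"
    print(f"{v:.1f} V, conventional current flows {direction}")
# 170.0 V, conventional current flows hot -> cold
# -170.0 V, conventional current flows cold -> hot
```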

Look at a light bulb. Not directly – you’ll hurt your eyes – actually, let’s just think of a light bulb. I turn on the switch on my wall and that closes a connection which sends electricity to the bulb. That electricity flows through the bulb, which has some resistance. Because of this resistance, the bulb has to dissipate power, which it does by heating up so much that it starts to glow. But remember, the electricity which I’m sending to the bulb is not constant. It’s fluctuating up and down between -170 and 170 volts. Since it takes a little while for the bulb to heat up and cool down, it’s always lagging behind the voltage change. In fact, it’s so slow that it stays virtually constant in temperature, and therefore in brightness.

The light bulb does not respond to instantaneous voltage values. Instead, it burns off an average amount of power over time. What we want is the equivalent DC voltage that would result in that same average power dissipation. The question is, how do we calculate it?

First we’ll begin by looking at the average voltage delivered to your light bulb by the electricity company. If we average the voltage over the full 360° of the sine wave that they provide to the outlets in your house, we wind up with 0 V. This is because the voltage is negative as much as it’s positive in the full wave: it’s symmetrical around 0 V. This is not a good way for the hydro company to decide how much to bill you, because your monthly cost would be 0 dollars. (Sounds good to me – but bad to the electricity company...)

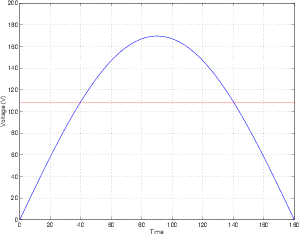

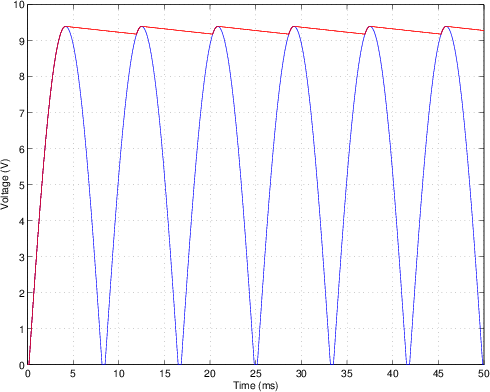

What if we only consider one half of a cycle of the 60 Hz waveform? Then the voltage curve looks like the first half of a sine wave, and there are 180° in this section of the wave. Suppose we measure the voltage at each degree of the wave, add the results together and divide by 180 (in other words, find the average voltage). We would come up with a number which is about 63.7% (2/π) of the peak value of the wave. For example, the hydro company gives me a 170 V peak sine wave. Therefore, the average voltage which I receive for the positive half of each wave is 170 V × 0.637, or about 108 V, as is shown in Figure 2.5.
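The “measure at each degree and average” procedure described above translates directly into a few lines of Python. This sketch assumes a 170 V peak sine sampled at 1°, 2°, … 180°. Sampling more finely converges on the exact fraction, 2/π ≈ 0.6366 of the peak.

```python
import math

PEAK_V = 170.0

# One sample per degree across the positive half cycle (1 degree to 180 degrees).
half_cycle = [PEAK_V * math.sin(math.radians(deg)) for deg in range(1, 181)]
average_v = sum(half_cycle) / len(half_cycle)

print(f"{average_v / PEAK_V:.4f}")  # 0.6366 (fraction of the peak)
print(f"{average_v:.1f} V")         # 108.2 V
```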

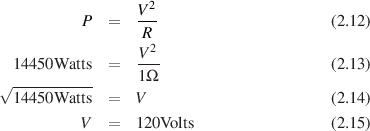

This does not, however, give me the equivalent DC voltage level which would match my AC power usage, because our calculation did not take power into account. In order to find this level, we have to complicate matters a little. We know from Watt’s law and Ohm’s law that P = V²/R. Therefore, if we have an AC wave of 170 V peak in a circuit containing a 1 Ω resistor, the peak power consumption is

$$P_{peak} = \frac{V_{peak}^2}{R} = \frac{(170\ \text{V})^2}{1\ \Omega} = 28900\ \text{W} \tag{2.11}$$

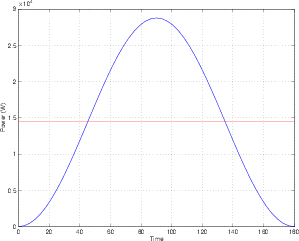

But this is the power consumption for one point in time, when the voltage level is actually at 170 V. The rest of the time, the voltage is either swinging on its way up to 170 V or on its way down from 170 V. The power consumption curve is therefore no longer a sine wave, but a sin² wave. Think of it as taking all of those 180 voltage measurements (one for each degree) and squaring each one. Strictly, each square is also divided by R, but R is 1 Ω here. From this list of 180 numbers (the instantaneous power consumption for each of the 180°), we can find the average power consumed over half of a waveform. This number turns out to be 0.5 of the peak power. In the above case, that is 0.5 × 28900 W, or 14450 W, as is shown in Figure 2.6.
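Here is the same one-sample-per-degree approach applied to power. The Python sketch below squares each voltage sample, divides by R, and averages. It assumes the 170 V peak sine and 1 Ω resistor used in the text.

```python
import math

PEAK_V = 170.0
R_OHMS = 1.0

# Instantaneous power P = V^2 / R at each degree of the positive half cycle.
powers = [(PEAK_V * math.sin(math.radians(deg))) ** 2 / R_OHMS for deg in range(1, 181)]

peak_power = PEAK_V ** 2 / R_OHMS
average_power = sum(powers) / len(powers)

print(f"{peak_power:.1f} W")                # 28900.0 W
print(f"{average_power:.1f} W")             # 14450.0 W
print(f"{average_power / peak_power:.3f}")  # 0.500
```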

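This gives us the average power consumption of the resistor, but what is the equivalent DC voltage which would result in this consumption? We find this by using Watt’s law in reverse as follows:

$$P = \frac{V^2}{R} \tag{2.12}$$

$$V^2 = PR \tag{2.13}$$

$$V = \sqrt{PR} \tag{2.14}$$

$$V = \sqrt{14450\ \text{W} \times 1\ \Omega} \approx 120\ \text{V} \tag{2.15}$$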

Therefore, 120 VDC would result in the same power consumption over a period of time as a 170 V peak AC sine wave. This equivalent is called the Root Mean Square or RMS of the AC voltage. We call it this because it’s the square root of the mean (or average) of the square of the original voltage.
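The name spells out the recipe: square, take the mean, then take the square root. Here is a minimal Python sketch of that recipe. It uses the same assumed 170 V peak half cycle sampled once per degree, and compares the result with the peak × 0.707 shortcut.

```python
import math


def rms(samples):
    """Root mean square: square each sample, take the mean, then the square root."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


PEAK_V = 170.0
half_cycle = [PEAK_V * math.sin(math.radians(deg)) for deg in range(1, 181)]

print(f"{rms(half_cycle):.1f} V")        # 120.2 V
print(f"{PEAK_V / math.sqrt(2):.1f} V")  # 120.2 V (peak x 0.707)
```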

In other words, a light bulb in a lamp plugged into the wall (remember, it’s being fed a 170 V peak AC sine wave) will be exactly as bright as it would be if it were fed 120 VDC.

Just for a point of reference, the RMS value of a sine wave is always 0.707 of the peak value and the RMS value of a square wave (with a 50% duty cycle) is always the peak value. If you use other waveforms, the relationship between the peak value and the RMS value changes.

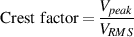

This relationship between the RMS and the peak value of a waveform is called the crest factor. This is a number that describes the ratio of the peak to the RMS of the signal, therefore

$$\text{Crest factor} = \frac{V_{peak}}{V_{RMS}} \tag{2.16}$$

So, the crest factor of a sine wave is 1.41 (or √2). The crest factor of a square wave is 1.
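Here is a minimal Python sketch of the crest factor. It computes the ratio for one full cycle of a sine wave and of a 50% duty-cycle square wave, both with a peak of 1. The sampling density (3600 samples per cycle) is an arbitrary choice.

```python
import math


def rms(samples):
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def crest_factor(samples):
    """Ratio of the peak (largest absolute value) to the RMS value."""
    return max(abs(x) for x in samples) / rms(samples)


N = 3600  # samples per cycle (arbitrary)
sine = [math.sin(2 * math.pi * n / N) for n in range(N)]
square = [1.0 if n < N // 2 else -1.0 for n in range(N)]  # 50% duty cycle

print(f"{crest_factor(sine):.3f}")    # 1.414
print(f"{crest_factor(square):.3f}")  # 1.000
```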

This causes a small problem when you’re using a digital volt meter. The readings on these devices ostensibly show you the RMS value of the AC waveform you’re measuring, but many of them don’t really measure the RMS value. Instead, they measure something simpler, such as the peak value of the wave. They then scale it to the RMS value that a sine wave would have. For the peak, that means multiplying by 0.707. In other words, they’re assuming that you’re measuring a sine wave. If the waveform is anything other than a sine, then the measurement will be incorrect (unless you’ve thrown out a ton of money on a True RMS multimeter...)
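To see why the waveform shape matters, the Python sketch below compares three simplified, hypothetical meters on a 10 V peak sine wave and a 10 V peak square wave:

- one that reads the peak and multiplies by 0.707;
- one that averages the rectified wave and scales by π/(2√2) ≈ 1.11, another common way of calibrating a meter for sine waves;
- a true RMS calculation.

These are models only, not descriptions of any particular meter.

```python
import math

N = 3600     # samples per cycle (arbitrary)
PEAK = 10.0  # volts


def peak_reading_meter(xs):
    """Reads the peak and scales it as if the wave were a sine (x 0.707)."""
    return max(abs(x) for x in xs) / math.sqrt(2)


def average_reading_meter(xs):
    """Averages the rectified wave and scales it as if the wave were a sine."""
    rectified_mean = sum(abs(x) for x in xs) / len(xs)
    return rectified_mean * math.pi / (2 * math.sqrt(2))


def true_rms(xs):
    return math.sqrt(sum(x * x for x in xs) / len(xs))


sine = [PEAK * math.sin(2 * math.pi * n / N) for n in range(N)]
square = [PEAK if n < N // 2 else -PEAK for n in range(N)]

for name, wave in (("sine", sine), ("square", square)):
    print(name,
          f"{peak_reading_meter(wave):.2f}",
          f"{average_reading_meter(wave):.2f}",
          f"{true_rms(wave):.2f}")
# sine 7.07 7.07 7.07
# square 7.07 11.11 10.00
```

On the sine wave all three agree. On the square wave, both shortcut meters miss the true value of 10 V, in opposite directions.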

There’s just one small problem with this explanation. We’re talking about the RMS value of an alternating voltage being determined in part by an average of the instantaneous voltages over a period of time called the time constant. In Figure 2.6, we’re assuming that the signal is averaged over at least one half of one cycle of the sine wave. If the average is taken over a whole number of half cycles (or over a window much longer than one cycle), then our math will work out fine. What if this wasn’t the case, however? What if the time constant was shorter than one half of a cycle?

Take a look at the signal in Figure 2.7. This signal usually has a pretty low level, but there’s a spike in the middle of it. This signal consists of a string of 1000 values, numbered from 1 to 1000. If we assume that this is a voltage level, then it can be converted to a power value by squaring it (we’ll keep assuming that the resistance is 1 Ω). That power curve is shown in Figure 2.8.

Now, let’s make a running average of the values in this signal. We could simply take all 1000 values that are plotted in Figure 2.8 and find a single average. Instead, let’s average 100 values at a time (the length of this window in time is our time constant). So, the first average will be of the values 1 to 100, and the second will be of the values 2 to 101. This continues until we get to the average of values 901 to 1000. If these averages are plotted, they’ll look like the graph in Figure 2.9.

There are a few things to note about this signal. Firstly, notice how the signal gradually ramps in at the beginning. This is because the time window that we’re using for the average gradually “slides” over the transition from no signal to a low-level signal, so the total average gradually increases. Also notice what happens to the spike. In the instantaneous power curve it was very short and very high-level. Here it becomes a very wide (in fact, the width of the time constant), much lower-level signal (notice the scale of the y-axis). This is because the short spike is just getting thrown into an average with a lot of low-level signals, so the RMS value is much lower. Finally, the end ramps out just as the beginning ramped in, for the same reasons.

So, we can now see that the RMS value can be much smaller than the peak value, but that this relationship is highly dependent on the time constant of the RMS detection. The shorter the time constant, the closer the RMS value is to the instantaneous peak value. (In fact, if the time constant were infinitely short, then the RMS value would simply follow the instantaneous value, and its maximum would equal the peak...)
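The running average described above can be sketched in Python as follows. The test signal is invented for illustration: silence, then a low level of 0.1 with a single spike of 1.0, then silence. It is not the data behind Figures 2.7 to 2.9. As in the text, the values are treated as volts across 1 Ω, so squaring gives power, and the window length plays the role of the time constant. The helper name `running_mean_power` is hypothetical.

```python
def running_mean_power(voltages, window):
    """Slide a window of `window` samples along the squared signal and average it."""
    powers = [v * v for v in voltages]  # P = V^2 / R with R = 1 ohm
    return [sum(powers[i:i + window]) / window
            for i in range(len(powers) - window + 1)]


# Hypothetical 1000-sample signal: 100 silent, 800 low-level, 100 silent.
signal = [0.0] * 100 + [0.1] * 800 + [0.0] * 100
signal[500] = 1.0  # a short, loud spike in the middle

for window in (100, 10, 1):
    averages = running_mean_power(signal, window)
    print(window, len(averages), f"{max(averages):.4f}")
# 100 901 0.0199
# 10 991 0.1090
# 1 1000 1.0000
```

With a 100-sample window, the spike’s instantaneous power of 1.0 is spread into a plateau of about 0.02 that is 100 averages wide. Shrinking the window brings the result back toward the instantaneous value.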

The moral of the story is that it’s not enough to just know that you’re being given the RMS value, you’ll also need to know what the time constant of that RMS value is.


Thanks to Mr. Ray Rayburn for his proofreading of and suggestions for this section.

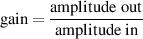

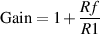

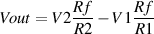

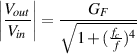

Lesson 1 for almost all recording engineers comes from the classic movie “This Is Spinal Tap”, where we all learned that the only reason for buying any piece of audio gear is to make things louder (“It goes all the way up to 11...”). The amount by which a device makes a signal louder or quieter is called the gain of the device. If the output of the device is two times the amplitude of the input, then we say that the device has a gain of 2. This can be easily calculated using Equation 2.17.

$$\text{Gain} = \frac{\text{output amplitude}}{\text{input amplitude}} \tag{2.17}$$

Note that you can use gain for evil as well as good: you can have a gain of less than 1 (but more than 0), which means that the output is quieter than the input.

If the gain equals 1, then the output is identical to the input.

If the gain is 0, then this means that the output of the device is 0, regardless of the input.

Finally, if the device has a negative gain, then the output will have an opposite polarity compared to the input. (As you go through this section, you should always keep in mind that a negative gain is different from a gain with a negative value in dB... but we’ll straighten this out as we go along.)
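Taking gain as the ratio of output amplitude to input amplitude, as in the text, the cases above can be checked with a small Python sketch. `gain` is a hypothetical helper.

```python
def gain(v_in: float, v_out: float) -> float:
    """Gain as the ratio of output amplitude to input amplitude."""
    return v_out / v_in


print(gain(1.0, 2.0))   # 2.0  -> output twice as loud
print(gain(1.0, 0.5))   # 0.5  -> output quieter than input
print(gain(1.0, 1.0))   # 1.0  -> output identical to input
print(gain(1.0, 0.0))   # 0.0  -> no output at all
print(gain(1.0, -2.0))  # -2.0 -> louder, with the polarity inverted
```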

(Incidentally, Lesson 2 for recording engineers, entitled “How to wrap a microphone cable with one hand while holding a chili dog and a styrofoam cup of black coffee with 5 sugars in the other hand and not spill anything on your Twisted Sister World Tour T-shirt” will be addressed in a later chapter.)

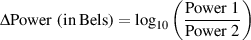

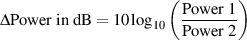

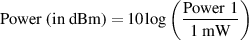

Sound in the air is a change in pressure. The greater the change, the louder the sound. The softest sound you can hear, according to the books, is 20 × 10⁻⁶ (or 0.00002) pascals, abbreviated “Pa”. (It doesn’t really matter how big a Pa is – you just need to know the number for now...) The loudest sound you can tolerate without screaming in pain is about 200,000,000 × 10⁻⁶ Pa (or 200 Pa). The ratio of the loudest sound to the softest sound is therefore 10,000,000:1: the pressure of the loudest sound is 10,000,000 times that of the softest. This range is simply too big to put on the fader of a mixing console. So a group of people at Bell Labs decided to represent the same scale with smaller numbers. They arrived at a unit of measurement called the Bel (named after Alexander Graham Bell – hence the capital B). The Bel is a measurement of power difference. It’s really just the base-10 logarithm of the ratio of two powers (Power1:Power2 or Power1/Power2). So, to find the difference between two power measurements in Bels (B), we use the following equation:

$$\text{Bels} = \log_{10}\left(\frac{\text{Power}_1}{\text{Power}_2}\right) \tag{2.18}$$

Let’s leave the subject for a minute and talk about measurements. Our basic unit of length is the metre (m). If I were to talk about the distance between the wall and me, I would measure that distance in metres. If I were to talk about the distance between Newfoundland and me, I would not use metres; I would use kilometres. Why? Because if I were to measure the distance between Newfoundland and my house in Denmark in metres, the number would be something like 4,176,120 m. This number is too big, so I say I’ll measure it in kilometres. I know that 1 km = 1000 m, therefore the distance between Newfoundland and me is 4,176,120 m ÷ 1000 m/km ≈ 4,176 km. The same would apply if I were measuring the length of a pencil. I would not use metres, because the number would be something like 0.15 m. It’s easier to think in centimetres or millimetres for small distances. All we’re really doing is making the number look nicer.

The same applies to Bels. It turns out that if we use the above equation, we’ll start getting small numbers – too small for comfort. So, instead of using Bels, we use decibels, or dB. Now all we have to do is convert.

There are 10 dB in a Bel, so if we know the number of Bels, the number of decibels is just 10 times that. So:

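$$\text{dB} = 10 \times \text{Bels} \tag{2.19}$$

$$\text{dB} = 10 \log_{10}\left(\frac{\text{Power}_1}{\text{Power}_2}\right) \tag{2.20}$$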

So that’s how you calculate dB when you have two different amounts of power and you want to find the difference between them. The point that I’m trying to overemphasize thus far is that we are dealing with power measurements. We know that power is measured in watts (or Joules per second if you’re reading an older book) so we use the above equation only when the ratio is comparing two measurements in watts.
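Both forms of the power relationship can be evaluated with Python’s standard `math.log10`. Here is a minimal sketch; the function names are illustrative only.

```python
import math


def bels(power1_w: float, power2_w: float) -> float:
    """Base-10 logarithm of a power ratio."""
    return math.log10(power1_w / power2_w)


def decibels_from_power(power1_w: float, power2_w: float) -> float:
    """10 log10 of a power ratio: use only when both values are powers."""
    return 10 * math.log10(power1_w / power2_w)


print(bels(100, 1))                        # 2.0
print(decibels_from_power(100, 1))         # 20.0
print(f"{decibels_from_power(2, 1):.2f}")  # 3.01 (doubling the power)
print(f"{decibels_from_power(1, 2):.2f}")  # -3.01 (halving the power)
```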

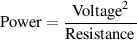

What if we wanted to calculate the difference between two voltages (or electrical pressures)? Well, Watt’s Law says that:

$$P = VI \tag{2.21}$$

or

$$P = \frac{V^2}{R} \tag{2.22}$$

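Therefore, if we know our two voltages (V1 and V2) and we know the resistance stays the same:

$$
\begin{aligned}
\text{dB} &= 10 \log_{10}\left(\frac{\text{Power}_1}{\text{Power}_2}\right) \\
&= 10 \log_{10}\left(\frac{V_1^2 / R}{V_2^2 / R}\right) \\
&= 10 \log_{10}\left(\frac{V_1^2}{V_2^2}\right) \\
&= 10 \log_{10}\left(\left(\frac{V_1}{V_2}\right)^2\right) \\
&= 2 \times 10 \log_{10}\left(\frac{V_1}{V_2}\right) \\
&= 20 \log_{10}\left(\frac{V_1}{V_2}\right)
\end{aligned}
$$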

That’s it! (Finally!) So, the moral of the story is, if you want to compare two voltages and express the difference in dB, you have to go through that last equation.
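Here is a minimal Python sketch that checks the derivation numerically. The shortcut 20 log10(V1/V2) gives the same answer as going “the long way” through power with a fixed resistance. The 600 Ω value is arbitrary because R cancels out.

```python
import math


def decibels_from_voltage(v1: float, v2: float) -> float:
    """20 log10 of a voltage ratio (same resistance assumed for both)."""
    return 20 * math.log10(v1 / v2)


R_OHMS = 600.0  # any fixed value works; it cancels out
v1, v2 = 2.0, 1.0

# The long way: convert each voltage to power, then take 10 log10 of the ratio.
via_power = 10 * math.log10((v1 ** 2 / R_OHMS) / (v2 ** 2 / R_OHMS))

print(f"{decibels_from_voltage(v1, v2):.2f}")  # 6.02
print(f"{via_power:.2f}")                      # 6.02
```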

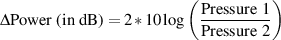

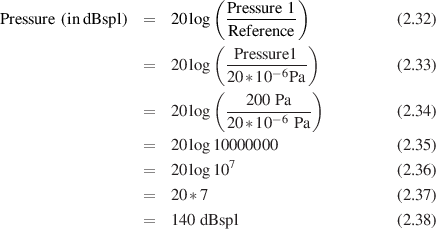

Remember, voltage is analogous to pressure. So if you want to compare two pressures (like 20 × 10⁻⁶ Pa and 200,000,000 × 10⁻⁶ Pa), you have to use the same equation. Just substitute P1 and P2 for V1 and V2, like this:

$$\text{dB} = 20 \log_{10}\left(\frac{P_1}{P_2}\right) \tag{2.31}$$

This is all well and good if you have two measurements (of power, voltage or pressure) to compare with each other, but what about all those books that say something like “a jet at takeoff is 140 dB loud.” What does that mean? Well, what it really means is “the sound a jet makes when it’s taking off is 140 dB louder than...” Doesn’t make a great deal of sense... Louder than what? The first measurement was the sound pressure of the jet taking off, but what was the second measurement with which it’s compared?

This is where we get into variations on the dB. There are a number of different types of dB which have references (second measurements) already supplied for you. We’ll do them one by one.

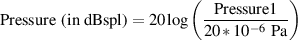

The dBspl is a measurement of sound pressure (spl stands for Sound Pressure Level). What you do is take a measurement of, say, the sound pressure of a jet at takeoff (measured in Pa). This provides Pressure1. Our reference, Pressure2, is given as the sound pressure of the softest sound you can hear, which we have already said is 20 × 10⁻⁶ Pa.

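Let’s say we go to the end of an airport runway with a sound pressure meter and measure a jet as it flies overhead. Let’s also say that, hypothetically, the sound pressure turns out to be 200 Pa, and that we want to convert this into dBspl. So, the sound of a jet at takeoff is:

$$
\begin{aligned}
\text{dBspl} &= 20 \log_{10}\left(\frac{P_1}{P_2}\right) \\
&= 20 \log_{10}\left(\frac{200\ \text{Pa}}{20 \times 10^{-6}\ \text{Pa}}\right) \\
&= 20 \log_{10}(10\,000\,000) \\
&= 20 \times 7 \\
&= 140\ \text{dBspl}
\end{aligned}
$$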

So what we’re saying is that a jet taking off is 140 dBspl which means “the sound pressure of a jet taking off is 140 dB louder than the softest sound I can hear.”
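The same dBspl calculation as a small Python sketch, using the 20 × 10⁻⁶ Pa reference and the hypothetical 200 Pa jet from the text. `db_spl` is an illustrative helper name.

```python
import math

P_REF_PA = 20e-6  # threshold of hearing, the dBspl reference


def db_spl(pressure_pa: float) -> float:
    """Sound pressure level relative to 20 micropascals (a pressure ratio, so 20 log10)."""
    return 20 * math.log10(pressure_pa / P_REF_PA)


print(f"{db_spl(200):.1f}")    # 140.0 (the hypothetical jet)
print(f"{db_spl(20e-6):.1f}")  # 0.0 (the threshold of hearing itself)
```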

This issue is covered in Section 5.4.2.

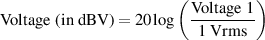

When you’re measuring sound pressure levels, you use a reference based on the threshold of hearing (20 × 10⁻⁶ Pa), which is fine. But what if you want to measure the electrical power output of a piece of audio equipment? What is the reference that you use to compare your measurement? Well, in 1939, a bunch of people sat down at a table and decided that when the needles on their equipment read 0 VU, the power output of the device in question should be 0.001 W, or 1 milliwatt (mW). Now, remember that the power in watts is dependent on two things – the voltage and the resistance (Watt’s law again). Back in 1939, the impedance of the input of every piece of audio gear was 600 Ω. Say you were an equipment manufacturer in 1939 and you wanted to build a tape deck or an amplifier or anything else with an input. The impedance across the input wire and the ground in the connector would have to be 600 Ω.

As a result, people today (including me until my error was spotted by Ray Rayburn) believe that the dBm measurement uses two standard references – 1 mW across a 600Ω impedance. This is only partially the case. We use the 1 mW, but not the 600Ω. To quote John Woram, “...the dBm may be correctly used with any convenient resistance or impedance.” [Woram, 1989]

By the way, the m stands for milliwatt.

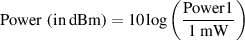

Now this is important: since your reference is in mW we’re dealing with power. Decibels are a measurement of a power difference, therefore you use the following equation:

$$\text{dBm} = 10 \log_{10}\left(\frac{\text{Power}_1}{1\ \text{mW}}\right) \tag{2.39}$$

Where Power1 is measured in mW.

What’s so important? There’s a 10 in there and not a 20. It would be 20 if we were measuring pressure, either sound or electrical, but we’re not. We’re measuring power.
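Here is a minimal Python sketch of the dBm calculation that shows the 10 at work. The function name `dbm` is hypothetical. Power is given in milliwatts, and no impedance is involved.

```python
import math

P_REF_MW = 1.0  # dBm reference: 1 milliwatt (no impedance implied)


def dbm(power_mw: float) -> float:
    """Return a power level in dBm for a power given in milliwatts."""
    # Power ratio, so the multiplier is 10, not 20
    return 10 * math.log10(power_mw / P_REF_MW)


print(round(dbm(1.0), 2))     # 0.0   (the reference)
print(round(dbm(1000.0), 2))  # 30.0  (1 W)
print(round(dbm(2.0), 2))     # 3.01  (doubling the power)
```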

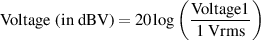

Nowadays, the 600 Ω specification doesn’t apply anymore. The input impedance of a tape deck you pick up off the shelf tomorrow could be anything – but it’s likely to be pretty high, somewhere around 10 kΩ. When the impedance is high, the dissipated power is low, because, for a given voltage, power is inversely proportional to the resistance (P = V²/R). Therefore, there may be times when your power measurement is quite low, even though your voltage is pretty high. In this case, it makes more sense to measure the voltage rather than the power. Now we need a new reference, one in volts rather than watts. Well, there are actually two references... The first one is 1 Vrms. When you use this reference, your measurement is in dBV.

So, you measure the voltage output of your piece of gear (let’s say a mixer, for example) and compare that measurement with the 1 Vrms reference, using the following equation.

$$\text{dBV} = 20 \log_{10}\left(\frac{\text{Voltage}_1}{1\ \text{V}_{\text{rms}}}\right) \tag{2.40}$$

Where Voltage1 is measured in Vrms.

Now this time it’s a 20 instead of a 10 because we’re measuring pressure and not power. Also note that the dBV does not imply a measurement across a specific impedance.
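Here is the same idea for dBV as a minimal Python sketch. The function name `dbv` is hypothetical. Notice what the 20 does to a doubling.

```python
import math

V_REF_DBV = 1.0  # dBV reference: 1 Vrms


def dbv(voltage_vrms: float) -> float:
    """Return a level in dBV for an RMS voltage."""
    # Voltage behaves like pressure, so the multiplier is 20
    return 20 * math.log10(voltage_vrms / V_REF_DBV)


print(round(dbv(1.0), 2))  # 0.0
print(round(dbv(2.0), 2))  # 6.02 (doubling the voltage)
```

Doubling a voltage adds about 6 dB, whereas doubling a power adds only about 3 dB. That is the practical consequence of using 20 rather than 10.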

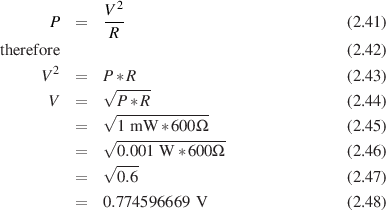

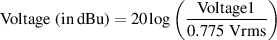

Let’s think back to the 1 mW into 600 Ω situation. What will be the voltage required to generate 1 mW in a 600 Ω resistor?

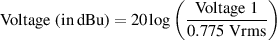

Therefore, the voltage required to generate the reference power was about 0.775 Vrms. Nowadays, we don’t use the 600Ω impedance anymore, but the rounded-off value of 0.775 Vrms was kept as a standard reference. So, if you use 0.775 Vrms as your reference voltage in the equation like this:
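Since P = V²/R, the voltage is V = √(P × R). Here is a quick Python check of that number. The helper name is hypothetical.

```python
import math


def voltage_for_power(power_w: float, resistance_ohm: float) -> float:
    """RMS voltage that dissipates power_w in resistance_ohm (V = sqrt(P * R))."""
    return math.sqrt(power_w * resistance_ohm)


v_ref = voltage_for_power(0.001, 600.0)  # 1 mW into 600 ohms
print(round(v_ref, 4))  # 0.7746, usually rounded to 0.775 Vrms
```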

$$\text{dBu} = 20 \log_{10}\left(\frac{\text{Voltage}_1}{0.775\ \text{V}_{\text{rms}}}\right) \tag{2.49}$$

your unit of measure is called dBu, where Voltage1 is measured in Vrms.

(It used to be called dBv, but people kept mixing up dBv with dBV and that couldn’t continue, so they changed the dBv to dBu instead. You’ll still see dBv occasionally – it is exactly the same as dBu... just different names for the same thing.)

Remember – we’re still measuring pressure so it’s a 20 instead of a 10, and, like the dBV measurement, there is no specified impedance.
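Here is a minimal Python sketch of dBu in both directions: a voltage to dBu, and a dBu level back to a voltage. Both function names are hypothetical.

```python
import math

V_REF_DBU = 0.775  # Vrms, the rounded-off 1 mW-into-600-ohm voltage


def dbu(voltage_vrms: float) -> float:
    """Level in dBu for an RMS voltage (no impedance implied)."""
    return 20 * math.log10(voltage_vrms / V_REF_DBU)


def dbu_to_vrms(level_dbu: float) -> float:
    """Inverse: the RMS voltage corresponding to a level in dBu."""
    return V_REF_DBU * 10 ** (level_dbu / 20)


print(round(dbu(0.775), 2))         # 0.0
print(round(dbu_to_vrms(4.0), 3))   # 1.228 (the +4 dBu level)
```

The dBu and the dBV differ only in their reference voltage. As a result, 0 dBu works out to 20 log₁₀(0.775) ≈ -2.21 dBV.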

The dBFS designation is used for digital signals, so we won’t talk about it here. It’s discussed later in Chapter 10.1.

Once upon a time you may have learned that “professional” gear ran at a nominal operating level of +4 dB compared to “consumer” gear at only -10 dB. (Nowadays, this seems to be the only distinction between the two...) What few people ever notice is that this is not a 14 dB difference in level. If you take a piece of consumer gear outputting what it thinks is 0 dB VU (0 dB on the VU meter), and you plug it into a piece of pro gear, you’ll find that the level is not -14 dB but -11.79 dB VU... The reason for this is that the professional level is +4 dBu and the consumer level is -10 dBV. Therefore the two measurements use different reference voltages.

0 dB VU on a piece of pro gear is +4 dBu which in turn translates to an actual voltage level of 1.228 Vrms. In comparison, 0 dB VU on a piece of consumer gear is -10 dBV, or 0.316 Vrms. If we compare these two voltages in terms of decibels, the result is a difference of 11.79 dB.
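The 11.79 dB figure can be reproduced in a few lines of Python. This is a minimal sketch. The helper `level_to_vrms` is hypothetical, and it assumes both levels are RMS voltages.

```python
import math


def level_to_vrms(level_db: float, v_ref: float) -> float:
    """Convert a level in dB (relative to v_ref volts RMS) back to Vrms."""
    return v_ref * 10 ** (level_db / 20)


pro = level_to_vrms(4.0, 0.775)       # +4 dBu  (reference 0.775 Vrms)
consumer = level_to_vrms(-10.0, 1.0)  # -10 dBV (reference 1 Vrms)
difference_db = 20 * math.log10(pro / consumer)

print(round(pro, 3), round(consumer, 3), round(difference_db, 2))
# 1.228 0.316 11.79
```

The trick is to convert each level to volts using its own reference before comparing them.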

One other thing to remember is that, typically, professional gear uses balanced signals (this term will be discussed and defined in Section 6.5.2) on XLR connectors whereas consumer gear uses unbalanced signals on phono (RCA) or 1/4” jacks. When you’re measuring professional gear, the +4 dBu signal is between the hot and cold pins on the XLR (pins 2 and 3 respectively). If you measure between either of those pins and ground (pin 1) then you’ll be 6 dB lower. In the case of consumer gear, you measure between the signal (the pin on a phono connector, the tip on a 1/4” jack) and ground.
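Where does the 6 dB come from? Here is a minimal sketch that assumes a symmetrically driven balanced output, so each pin carries half of the pin 2-to-pin 3 voltage relative to ground. Halving a voltage costs about 6 dB.

```python
import math

differential_vrms = 1.228  # +4 dBu measured between pins 2 and 3

# Assumption: a symmetrically driven balanced output, so each pin carries
# half of the pin 2-to-pin 3 voltage relative to ground (pin 1)
pin_to_ground_vrms = differential_vrms / 2

drop_db = 20 * math.log10(pin_to_ground_vrms / differential_vrms)
print(round(drop_db, 2))  # -6.02
```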

$$\text{dBspl} = 20 \log_{10}\left(\frac{\text{Pressure}_1}{20 \times 10^{-6}\ \text{Pa}}\right) \tag{2.50}$$

where Pressure1 is measured in Pa.

$$\text{dBm} = 10 \log_{10}\left(\frac{\text{Power}_1}{1\ \text{mW}}\right) \tag{2.51}$$

where Power1 is measured in mW.

$$\text{dBV} = 20 \log_{10}\left(\frac{\text{Voltage}_1}{1\ \text{V}_{\text{rms}}}\right) \tag{2.52}$$

where Voltage1 is measured in Vrms.

$$\text{dBu} = 20 \log_{10}\left(\frac{\text{Voltage}_1}{0.775\ \text{V}_{\text{rms}}}\right) \tag{2.53}$$

where Voltage1 is measured in Vrms.

Picture it – you’re getting a shower one morning and someone in the bathroom downstairs flushes the toilet... what happens? You scream in pain because you’re suddenly deprived of cold water in your comfortable hot / cold mix... Not good. Why does this happen? It’s simple... It’s because you were forced to share cold water without being asked for permission. Essentially, we can pretend (for the purposes of this example...) that there is a steady pressure pushing the flow of water into your house. When the shower and the toilet are asking for water from the same source (the intake to your house) then the water that was normally flowing along only one path suddenly goes in two directions. The flow is split between two paths.

How much water is going to flow down each path? That depends on the amount of resistance the water “sees” going down each path. The toilet is probably going to have a lower “resistance” to impede the flow of water than your shower head, and so more water flows through the toilet than the shower. If the resistance of the shower were smaller than that of the toilet, then you would only be mildly uncomfortable instead of jumping through the shower curtain to get away from the boiling water... In addition, the toilet would take longer to fill than it usually does when no one is showering.

Think back to the tanks connected by a pipe with a restriction in it described in Chapter 2.1. All of the water flowing from one tank to the other must flow through the pipe, and therefore, through the restriction. The flow of the water is, in fact, determined by the resistance of the restriction (we’re assuming that the pipe does not impede the flow of water... just the restriction...)

What would happen if we put a second restriction on the same pipe? The water flowing through the pipe from tank to tank now “sees” a single, bigger resistance, and therefore flows more slowly through the entire pipe.

The same is true of an electrical circuit. If we connect two resistors, end to end, and connect them to a battery as is shown in the diagram below, the current must flow from the positive battery terminal through the first resistor, through the second resistor, and into the negative terminal of the battery. Therefore the current leaving the positive terminal “sees” one big resistance equal to the sum of the two resistances.

What we are looking at here is an example of resistors connected in series. This essentially means that there is a series of resistors that the current must flow through in order to make its way through the entire circuit.

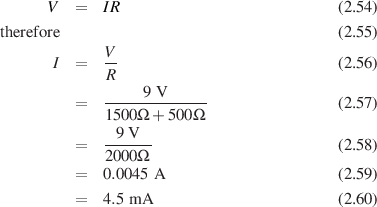

So, how much current will flow through this system? That depends on the two resistances. If we have a 9 V battery, a 1.5 kΩ resistor and a 500 Ω resistor, then the total resistance is 2 kΩ. From there we can just use Ohm’s law to figure out the total current running through the system: I = V / R = 9 V / 2 kΩ = 4.5 mA.

Remember that this is not only the current flowing through the entire system, it’s also therefore the current running through each of the two resistors. This piece of information allows us to go on to calculate the amount of voltage drop across each resistor.

Since we know the amount of current flowing through each of the two resistors, we can use Ohm’s law to calculate the voltage drop across each of them. Going back to our original example, let’s label our resistors R1 = 1.5 kΩ and R2 = 500 Ω.

V1 = I1R1 (This means the voltage drop across R1 = the current through R1 times the resistance of R1)

V1 = (9 V / 2 kΩ) × 1.5 kΩ = 4.5 mA × 1.5 kΩ = 6.75 V

Therefore the voltage drop across R1 is 6.75 V.

Now we can do the same thing for R2 (remember, it’s the same current...)

V2 = I2R2 = 4.5 mA × 500 Ω = 2.25 V

So the voltage drop across R2 is 2.25 V. An interesting thing to note here is that the voltage drop across R1 and the voltage drop across R2, when added together, equal 9 V. In fact, in this particular case, we could have calculated one of the two voltage drops and then simply subtracted it from the voltage produced by the battery to find the second voltage drop.
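The whole series calculation fits in a short Python function. This is a minimal sketch. The name `series_circuit` is hypothetical, and the resistor values are the ones from the example above.

```python
def series_circuit(v_supply, resistors):
    """Total resistance, current and per-resistor voltage drops for series resistors."""
    r_total = sum(resistors)                   # series resistances simply add
    current = v_supply / r_total               # Ohm's law: I = V / R
    drops = [current * r for r in resistors]   # same current through each: V = I * R
    return r_total, current, drops


r_total, current, drops = series_circuit(9.0, [1500.0, 500.0])
print(r_total)                        # 2000.0 (ohms)
print(round(current * 1000, 3))       # 4.5 (mA)
print([round(v, 3) for v in drops])   # [6.75, 2.25] (volts)
print(round(sum(drops), 3))           # 9.0, the battery voltage
```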

Now let’s connect the same two resistors to the battery slightly differently. We’ll put them side by side, parallel to each other, as shown in the diagram below. This configuration is called parallel resistors and their effect on the current and voltage in the circuit is somewhat different than when they were in series...

Look at the connections between the resistors and the battery. They are directly connected, therefore we know that the battery is ensuring that there is a 9 V voltage drop across each of the resistors. This is a state imposed by the battery, and you are simply expected to accept it as a given... (just kidding...)

The voltage difference across the battery terminals is 9 V – this is a given fact which doesn’t change whether they are connected with a resistor or not. If we connect two parallel resistors across the terminals, they still stay 9 V apart.

If this is causing some difficulty, think back to the example at the top of this page where we had a shower running while a toilet was flushing in the same house. The water pressure supplied to the house didn’t change... It’s the same thing with a battery and two parallel resistors.

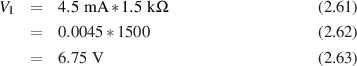

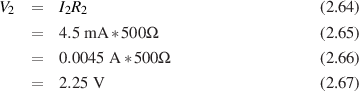

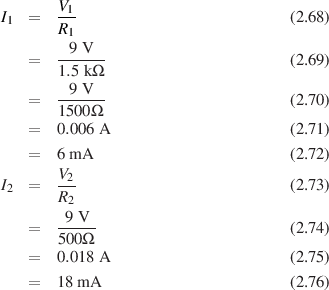

Since we know the amount of voltage applied across each resistor (in this case, they’re both 9 V) then we can again use Ohm’s law to determine the amount of current flowing through each of the two resistors.

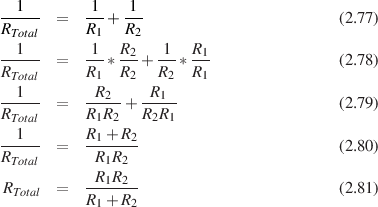

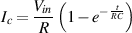

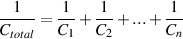

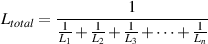

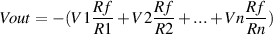

One way to calculate the total current coming out of the battery here is to calculate the two individual currents going through the resistors and add them together. This will work, and then from there, we can calculate backwards to figure out what the equivalent resistance of the pair of resistors would be. If we did that whole procedure, we would find that the reciprocal of the total resistance is equal to the sum of the reciprocals of the individual resistors. (huh?) It’s like this...

$$\frac{1}{R_{total}} = \frac{1}{R_1} + \frac{1}{R_2}$$
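Here are both routes in Python for the same 9 V, 1.5 kΩ and 500 Ω example: adding the branch currents and working backwards, and using the reciprocal formula. This is a minimal sketch, and `parallel_resistance` is a hypothetical helper name.

```python
def parallel_resistance(resistors):
    """Equivalent resistance: 1 / R_total = 1/R1 + 1/R2 + ..."""
    return 1.0 / sum(1.0 / r for r in resistors)


v_battery = 9.0
r1, r2 = 1500.0, 500.0

# Each resistor sees the full battery voltage, so Ohm's law gives each current
i1 = v_battery / r1
i2 = v_battery / r2
i_total = i1 + i2

print(round(i1 * 1000, 3), round(i2 * 1000, 3), round(i_total * 1000, 3))  # 6.0 18.0 24.0 (mA)
print(round(v_battery / i_total, 3))            # 375.0 (ohms), worked backwards
print(round(parallel_resistance([r1, r2]), 3))  # 375.0 (ohms), from the formula
```

The equivalent resistance (375 Ω) is smaller than either resistor on its own. Adding a second path lets more current flow, just like the toilet and the shower.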

Let’s go back a couple of chapters to the concept of a water pump sending water out its output through a pipe which has a constriction in it back to the input of the pump. We equated this system with a battery pushing current through a wire and resistor. Now, we’re replacing the restriction in the water pipe with a couple of waterbeds. Stay with me here – this will make sense, I promise.

If the input of the water pump is connected to one of the waterbeds and the output of the pump is connected to the other waterbed, and the output waterbed is placed on top of the input waterbed, what will happen? Well, if we assume that the two waterbeds have the same amount of water in them before we turn on the pump (therefore the water pressure in the two is the same... sort of...), then, after the pump is turned on, the water is drained from the bottom waterbed and placed in the top waterbed. This means that we have a change in the pressure difference between the two beds (the upper waterbed having the higher pressure). This difference will increase until we run out of water for the pump to move. The work the pump is doing is assisted by the fact that, as the top waterbed gets heavier, the water is pushed out of the bottom waterbed. Now, what does this have to do with electricity?

We’re going to take the original circuit with the resistor and the battery and we’re going to add a device called a capacitor in series with the resistor. A capacitor is a device with two metal plates that are placed very close together, but without touching. There’s a wire coming off of each of the two plates. Each of these plates, then, can act as a reservoir for electrons – we can push extra ones into a plate, making it negative (by connecting the negative terminal of a battery to it), or we can take electrons out, making the plate positive (by connecting the positive terminal of the battery to it). Remember, though, that electrons and the lack-of-electrons (holes) are mutually attracted to each other. As a result, the extra electrons in the negative plate are attracted to the holes in the positive plate. This means that the electrons and holes line up on the sides of the plates closest to the opposite plate – trying desperately to get across the gap. The narrower the gap, the more attraction, therefore the more electrons and holes we can pack in the plates. Also, the bigger the plates, the more electrons and holes we can get in there.

This device has the capacity to store quantities of electrons and holes – that’s why we call them capacitors. The value of the capacitor, measured in Farads (abbreviated F) is a measure of its capacity to hold electrons (we’ll leave it at that for now). That capacitance is determined by two things essentially – both physical attributes of the device. The first is the size of the plates – the bigger the plates, the bigger the capacitance. For big caps, we take a couple of sheets of metal foil with a piece of non-conductive material sandwiched between them (called the dielectric) and roll the whole thing up like a sleeping bag being stored for a hike – put the whole thing in a little can and let the wires stick out of the ends (or one end). The second attribute controlling the capacitance is the gap between the plates (the smaller the gap, the bigger the capacitance).

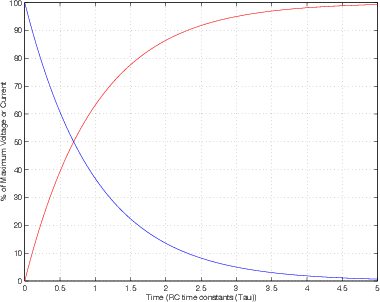

The reason we use these capacitors is because of a little property that they have which could almost be considered a problem – you can’t dump all the electrons you want to through the wire into the plate instantaneously. It takes a little bit of time, especially if we restrict the current flow a bit with a resistor. Let’s take a circuit as an example. We’ll connect a switch, a resistor, and a capacitor all in series with a battery, as is shown in Figure 2.12.

Just before we close the switch, let’s assume that the two plates of the capacitor have the same number of electrons and holes in them – therefore they are at the same potential – so the voltage across the capacitor is 0 V (in other words, they have the same electrical pressure). When we close the switch, the electrons in the negative terminal want to flow to the top plate of the cap to meet the holes flowing into the bottom plate. Therefore, when we first close the switch, we get a surge of current through the circuit which gradually decreases as the voltage across the capacitor increases. The more the capacitor fills with holes and electrons, the higher the voltage across it, and therefore the smaller the voltage across the resistor – this in turn means a smaller current.

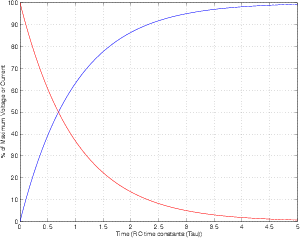

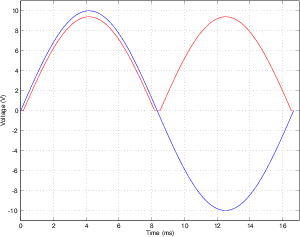

If we were to graph this change in the flow of current over time, it would look like the red line in Figure 2.13:

As you can see, the longer in time after the switch has been closed, the smaller the current. The graph of the change in voltage over time would be exactly opposite to this, plotted as the blue line in Figure 2.13.

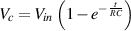

In case you want to be a geek and calculate these values, you can use the following equations – I’m just putting these two in for reference purposes, not because you need to know or understand them:

$$V_C = V_{in}\left(1 - e^{-\frac{t}{RC}}\right) \tag{2.82}$$

$$I_C = \frac{V_{in}}{R}\, e^{-\frac{t}{RC}} \tag{2.83}$$

Where Vc is the instantaneous voltage across the capacitor, Vin is the instantaneous voltage applied to the whole circuit, e is approximately 2.718, R is the resistance of the resistor in Ω, C is the capacitance of the capacitor in Farads, Ic is the instantaneous current flowing through the resistor and into the capacitor and t is time in seconds.
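If you do want to be that geek, here is a minimal Python sketch of Equations 2.82 and 2.83. It assumes a DC voltage is suddenly applied to a capacitor that starts at 0 V. The component values (9 V, 1 kΩ, 1 μF) are hypothetical, and so is the function name.

```python
import math


def rc_step_response(v_in: float, r_ohm: float, c_farad: float, t_s: float):
    """Capacitor voltage and current t_s seconds after a DC step of v_in volts.

    Assumes the capacitor starts at 0 V (Equations 2.82 and 2.83).
    """
    decay = math.exp(-t_s / (r_ohm * c_farad))
    v_c = v_in * (1 - decay)      # voltage across the capacitor rises...
    i_c = (v_in / r_ohm) * decay  # ...while the current falls
    return v_c, i_c


# Hypothetical values: 9 V battery, 1 kOhm, 1 uF (so RC = 1 ms)
for t in (0.0, 0.001, 0.002, 0.005):
    v_c, i_c = rc_step_response(9.0, 1000.0, 1e-6, t)
    print(f"t = {t * 1000:.0f} ms  Vc = {v_c:.3f} V  Ic = {i_c * 1000:.3f} mA")
```

At every instant, the resistor voltage (Ic × R) plus the capacitor voltage adds up to the 9 V from the battery.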

You may notice that in most books, the time axis of the graph is not marked in seconds but in something that looks like a τ – it’s called tau (that’s a Greek letter and not a Chinese word, in case you’re thinking that I’m going to make a joke about Winnie the Pooh... It’s also pronounced differently – say “tao” not “dao”). Tau is the symbol for something called a time constant, which is determined by the value of the capacitor and the resistor, as in Equation 2.84:

$$\tau = RC \tag{2.84}$$

As you can see, if either the resistance or the capacitance is increased, the RC time constant goes up. “But what’s a time constant?” I hear you cry... Well, a time constant is the time it takes for the voltage to reach 63.2% of the voltage applied to the capacitor. After 2 time constants, we’ve gone up 63.2% and then 63.2% of the remaining 36.8%, which means we’re at 86.5%... Once we get to 5 time constants, we’re at 99.3% of the voltage and we can consider ourselves to have reached our destination. (In fact, we never really get there – we just keep approaching the voltage forever.)
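These percentages come straight from 1 - e⁻ⁿ, where n is the number of time constants. This short Python loop (a sketch, nothing more) prints them. It also prints the gap that remains, which shrinks to e⁻¹ (36.8%) of its previous size with every time constant.

```python
import math

# Fraction of the applied voltage reached after n time constants: 1 - e^(-n)
for n in range(1, 6):
    fraction = 1 - math.exp(-n)
    # The remaining gap: each time constant leaves e^-1 (36.8%) of the previous gap
    remaining = math.exp(-1) ** n
    print(f"{n} tau: {fraction * 100:.1f}%  (remaining gap {remaining * 100:.1f}%)")
```

This prints 63.2%, 86.5%, 95.0%, 98.2% and 99.3% for 1 to 5 time constants.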

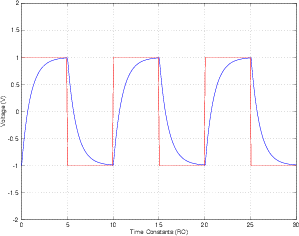

So, this is all very well if our voltage source is providing us with a suddenly applied DC, but what would happen if we replaced our battery with a square wave and monitored the voltage across and the current flowing into the capacitor? Well, the output would look something like Figure 2.14 (assuming that the period of the square wave = 10 time constants).

What’s going on? Well, the voltage is applied to the capacitor, and it starts charging, initially demanding lots of current through the resistor, but asking for less and less all the time. When the voltage drops to the lower half of the square wave, the capacitor starts charging (or discharging) to the new value, initially demanding lots of current in the opposite direction and slowly reaching the voltage. Since I said that the period of the square wave is 10 time constants, the voltage of the capacitor just reaches the voltage of the function generator (5 time constants...) when the square wave goes to the other value.
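Here is a minimal Python sketch of the square-wave case. Within each half period, the capacitor follows the same exponential, heading toward whichever level the square wave is currently at. The 0 V / 1 V levels, the 1 ms time constant and the function name are all hypothetical choices.

```python
import math


def square_wave_rc(v_high, v_low, tau, half_period, half_cycles):
    """Capacitor voltage at the end of each half period of a square wave.

    Within each half period the capacitor charges (or discharges)
    exponentially toward the current square-wave level:
        Vc(t) = V_target + (V_start - V_target) * exp(-t / tau)
    """
    v_c = 0.0  # assume the capacitor starts discharged
    ends = []
    for k in range(half_cycles):
        target = v_high if k % 2 == 0 else v_low
        v_c = target + (v_c - target) * math.exp(-half_period / tau)
        ends.append(v_c)
    return ends


tau = 1e-3  # hypothetical RC time constant of 1 ms
# Period = 10 time constants, so each half of the square wave lasts 5 tau
for v in square_wave_rc(1.0, 0.0, tau, 5 * tau, 6):
    print(round(v, 4))
```

Each half period ends within about 0.7% of the level the square wave is sitting at, which is the "just reaches" behaviour described above.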

Consider that, since the circuit is rounding off the square edges of the initially applied square wave, it must be doing something to the frequency response – but we’ll worry about that later.

Let’s now apply an AC sine wave to the input of the same circuit and look at what’s going on at the output. The voltage of the function generator is always changing, and therefore the capacitor is always being asked to change the voltage across it. However, it is not changing nearly as quickly as it was with the square wave. If the change in voltage over time is quite slow (therefore, a low frequency sine wave) the current required to bring the capacitor to its new (but always changing) voltage will be small. The higher the frequency of the sine wave at the input, the more quickly the capacitor must change to the new voltage, therefore the more current it demands. Therefore, the current flowing through the circuit is dependent on the frequency – the higher the frequency, the higher the current. If we think of this another way, we could pretend that the capacitor is a resistor which changes in value as the frequency changes – the lower the frequency, the bigger the resistor, because the smaller the current. This isn’t really what’s going on, but we’ll work that out in a minute.

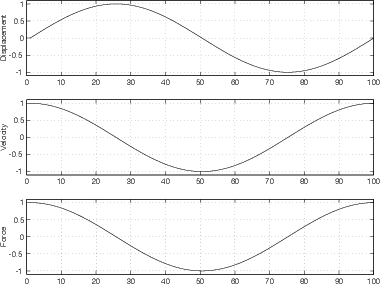

The lower the frequency, the lower the current – the smaller the capacitor the lower the current (because it needs less current to change to the new voltage than a bigger capacitor). Therefore, we have a new equation which describes this relationship:

$$X_C = \frac{1}{2 \pi f C} \tag{2.85}$$

Where f is the frequency in Hz, C is the capacitance in Farads, and π is 3.14159265...

What’s XC? It’s something called the capacitive reactance of the capacitor, and it’s expressed in Ω. It’s not the same as resistance for two reasons. Firstly, resistance burns power (lost as heat) when it resists the flow of current; when current is impeded by capacitive reactance, there is no power lost. Secondly, it’s different from a resistor because there is a different relationship between the voltage and the current flowing through (or into) the device. For resistors, Ohm’s Law tells us that V = IR, therefore if the resistor stays the same and the voltage goes up, the current goes up at the same time. Therefore, we can say that, when an AC voltage is applied to a resistor, the flow of current through the resistor is in phase with the voltage (when V is 0, I is 0, when V is maximum, I is maximum and so on). In a capacitive circuit (one where the reactance of the capacitor is much greater than the resistance of the resistor and the two are in ...) the current precedes the voltage (remember the time constant curves – voltage changes slowly, current changes quickly...) by 90°. This also means that the voltage across the resistor is 90° ahead of the voltage across the capacitor (because the voltage across the resistor is in phase with the current through it and into the capacitor).
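Here is Equation 2.85 as a minimal Python sketch. It shows how the reactance of a hypothetical 1 μF capacitor changes across the audio band. The function name is ours, and it treats 0 Hz (DC) as infinite reactance.

```python
import math


def capacitive_reactance(freq_hz: float, c_farad: float) -> float:
    """X_C = 1 / (2 * pi * f * C), in ohms; infinite at DC (0 Hz)."""
    if freq_hz == 0:
        return math.inf
    return 1.0 / (2 * math.pi * freq_hz * c_farad)


c = 1e-6  # hypothetical 1 uF capacitor
for f in (0, 20, 200, 2000, 20000):
    print(f"{f:>6} Hz: {capacitive_reactance(f, c):10.1f} ohms")
```

Each tenfold increase in frequency divides the reactance by ten, and at 0 Hz the reactance is infinite.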

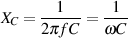

If this is a little tough to follow, try thinking of it a different way... Stand in a swimming pool up to your neck in water and take a dinner plate and hold it in your hands like you would hold the steering wheel of a car. Now start pushing and pulling the plate forwards and backwards – you’ll notice that this is hard to do because the water in the pool resists the movement of the plate. Now think about the relationship between where the plate is (its displacement), its speed and direction of travel (its velocity), and whether you’re pushing or pulling (your force). These three things are illustrated (but not to scale) in Figure 2.15. Notice that while the plate is moving away from you, you’re pushing. This is true whether the plate is near to you or far from you – your force is dependent on the velocity of the plate. One other thing to ask is where all your hard work is going – it’s being used to push water out of the way. In other words, you’re doing a lot of work for nothing.

Now get out of the pool and stand in front of a concrete wall with a spring sticking straight out of it. Glue the bottom of your dinner plate to the spring so that if you push the plate, it moves towards the wall and squeezes the spring. If you pull the plate, it moves towards you away from the wall, and expands the spring. Now think about the relationship between the plate’s displacement and velocity and your force once more. This is illustrated in Figure 2.16. You’ll notice that it’s a little different than when you were in the swimming pool. Now, your force is dependent on the displacement of the plate (since you’re doing all the work to overcome the force of the spring). If the plate has moved away from you, you’re pushing to overcome the compression of the spring – this is true regardless of whether the plate is moving away from or towards you. In addition, the force that you’re putting on the plate is not lost. You’re storing energy in the spring instead of just losing it as you were in the swimming pool.

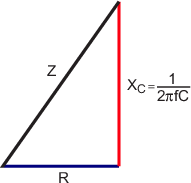

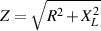

Let’s get back to the circuit we were talking about before all of this dinner plate stuff... As far as the function generator is concerned, it doesn’t know whether the current it’s being asked to supply is determined by resistance or reactance – all it sees is some THING out there, impeding the current flow differently at different frequencies (the lower the frequency, the higher the impedance). This impedance is not simply the sum of the resistance and the reactance, because the two are not in phase with each other – in fact they’re 90° out of phase. The way we calculate the total impedance of the circuit is by finding the square root of the sum of the squares of the resistance and the reactance:

$$Z = \sqrt{R^2 + X_C^2} \tag{2.86}$$

Where Z is the impedance of the RC combination, R is the resistance of the resistor, and XC is the capacitive reactance, all expressed in Ω.

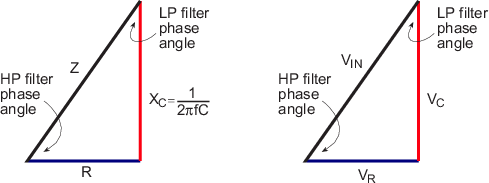

Remember back to Pythagoras – that same equation above is the one we use to find the length of the hypotenuse of a right triangle (a triangle whose legs are 90° apart) when we know the lengths of the legs. Get it? Voltages are 90° apart, legs are 90° apart. If you don’t get it, not to worry, it’s explained in Section 2.5.

Also, remember that, as frequency goes up, the XC goes down, and therefore the Z goes down. If the frequency is 0 Hz (or DC) then the XC is ∞ Ω, and the circuit is no longer closed – no current will flow. This will come in handy in the next chapter.
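Here is Equation 2.86 as a minimal Python sketch for a hypothetical 1 kΩ and 1 μF series pair. `math.hypot` is literally the Pythagorean hypotenuse. As a sanity check (not something the text itself uses), the loop compares it with Python's complex numbers, in which the capacitor's reactance is conventionally written as -jX_C.

```python
import math


def rc_impedance(r_ohm: float, c_farad: float, freq_hz: float) -> float:
    """Magnitude of a series RC impedance: Z = sqrt(R^2 + X_C^2)."""
    x_c = 1.0 / (2 * math.pi * freq_hz * c_farad)
    return math.hypot(r_ohm, x_c)  # Pythagoras: hypotenuse of R and X_C


r, c = 1000.0, 1e-6  # hypothetical 1 kOhm and 1 uF
for f in (20.0, 159.15, 2000.0, 20000.0):
    z = rc_impedance(r, c, f)
    # Cross-check with complex numbers: X_C sits at -90 degrees from R
    z_complex = abs(complex(r, -1.0 / (2 * math.pi * f * c)))
    print(f"{f:>8.2f} Hz: Z = {z:8.1f} ohms (complex check {z_complex:8.1f})")
```

As the frequency rises, Z falls toward the 1000 Ω of the resistor alone.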

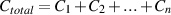

As for the combination of capacitors in series and parallel, it’s exactly the same equations as for resistors except that they’re opposite. If you put two capacitors in parallel – the total capacitance is bigger... in fact it’s the addition of the two capacitances (because you’re effectively making the plates bigger). Therefore, in order to calculate the total capacitance for a number of capacitors connected in parallel, you use Equation 2.87.

$$C_{total} = C_1 + C_2 + \dots + C_n \tag{2.87}$$

If the capacitors are in series, then you use the equation

$$\frac{1}{C_{total}} = \frac{1}{C_1} + \frac{1}{C_2} + \dots + \frac{1}{C_n} \tag{2.88}$$

Note that both of these equations are very similar to the ones for resistors, except that we use them “backwards.” That is to say that the equation for series resistors is the same as the one for parallel capacitors, and the one for parallel resistors is the same as the one for series capacitors.
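Here are the two capacitor rules as a minimal Python sketch. The function names are hypothetical, and the values are in μF so the numbers stay readable.

```python
def parallel_capacitance(caps):
    """Capacitors in parallel simply add (like resistors in series)."""
    return sum(caps)


def series_capacitance(caps):
    """Capacitors in series: 1/C_total = 1/C1 + 1/C2 + ... (like resistors in parallel)."""
    return 1.0 / sum(1.0 / c for c in caps)


caps_uf = [1.0, 1.0]  # two hypothetical 1 uF capacitors (values in uF)
print(parallel_capacitance(caps_uf))  # 2.0 (uF)
print(series_capacitance(caps_uf))    # 0.5 (uF)
```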

There is a specific type of capacitor called an electrolytic capacitor – so called because it uses an electrolyte (go look it up...) as the material for one of its plates. This increases the capacitance of the unit for its size (relative to caps that are not electrolytic), so you get a bigger capacitor in a smaller package. Because they usually have a large capacitance, they are usually used in low-frequency circuits (like power supplies) or for keeping DC out of circuits, both of which we’ll see later.

The only problem with electrolytic capacitors is that they are usually polarised. This means that they’re only happy when one plate is at a higher voltage than the other. When this is true, you’ll see a “+” sign somewhere on the cap near one of its two terminals to indicate that that terminal should be connected to the higher voltage. (It might be a “-” sign instead – you figure it out...) Note that if you do not make sure that this is obeyed, then the capacitor will start to heat up internally, then its contents will start to boil. Since the cap is sealed, this will cause a build-up in pressure that will, eventually, be released... usually with some violence.4

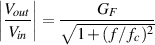

In the last chapter, we looked at a circuit similar to that in Figure 2.17 and we talked about the impedance of the RC combination as it related to frequency. Now we’ll talk about how to harness that incredible power to screw up your audio signal.

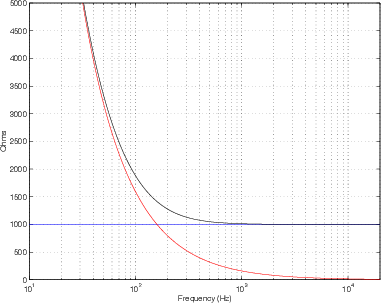

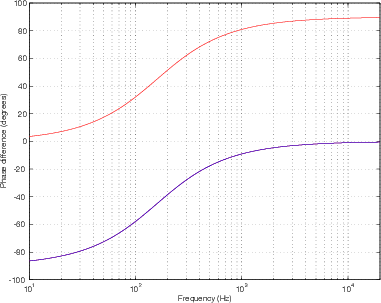

In this circuit, the lower the frequency, the higher the impedance, and therefore the lower the current flowing through the circuit. If we’re at 0 Hz, there is no current flowing through the circuit. If we’re at ∞ Hz (this is very high...) then the capacitor has a capacitive reactance of 0 Ω, and the impedance of the circuit is the resistance of the resistor. This can be seen in Figure 2.18.

We also talked about how, at low frequencies, the circuit is considered to be capacitive (because the capacitive reactance is MUCH greater than the resistor value and therefore the resistor is negligible in comparison).

When the circuit is capacitive, the current flowing through the resistor into the capacitor is changing faster than the voltage across the capacitor. We said in the previous chapter that, in this case, the current is 90° ahead of the voltage. This also means that the voltage across the resistor (which is in phase with the current) is 90° ahead of the voltage across the capacitor. This is shown in Figure 2.19.

Let’s look at the voltage across the capacitor as we change the frequency. At very low frequencies, the capacitor has a very high capacitive reactance, therefore the resistance of the resistor is negligible in comparison. If we consider the circuit to be a voltage divider (where the voltage is divided between the capacitor and the resistor) then there will be a much larger voltage drop across the capacitor than across the resistor. At DC (0 Hz) the XC is infinite, and the voltage across the capacitor is equal to the voltage output from the function generator. Another way to consider this is, if XC is infinite, then there is no current flowing through the resistor, therefore there is no voltage drop across it (because 0 V = 0 A × R). If the frequency is higher, then the reactance is lower, and we have a smaller voltage drop across the capacitor. The higher we go, the lower the voltage drop until, at ∞ Hz, we have 0 V.

If we were to plot the voltage drop across the capacitor relative to the frequency, it would, therefore, produce a graph like Figure 2.20.

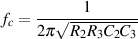

Note that we’re specifying the voltage as a level relative to the input of the circuit, expressed in dB. The frequency at which the output (the voltage drop across the capacitor) is 3 dB below the input (that is to say -3 dB) is called the cutoff frequency (fc) of the circuit. (We may as well start calling it a filter, since it’s filtering different frequencies differently... since it allows low frequencies to pass through unchanged, we’ll call it a low-pass filter.)

The fc of the low-pass filter can be calculated if you know the values of the resistor and the capacitor. The equation is shown in Equation 2.89:

$$f_c = \frac{1}{2 \pi R C} \tag{2.89}$$

(Note that if we put the values of the resistor and the capacitor from Figure 2.17 (R = 1 kΩ and C = 1 μF) into this equation, we get 159 Hz. This is the frequency where R = XC. This is also where the input and the voltages across the resistor and capacitor relate to each other as shown in Figure 2.19.)

Where fc is expressed in Hz, R is in Ω and C is in Farads.
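Here is a quick Python check of Equation 2.89 with the Figure 2.17 values. It confirms both the 159 Hz result and the claim that R = XC at fc. This is a minimal sketch with a hypothetical function name.

```python
import math


def cutoff_frequency(r_ohm: float, c_farad: float) -> float:
    """f_c = 1 / (2 * pi * R * C), in Hz."""
    return 1.0 / (2 * math.pi * r_ohm * c_farad)


r, c = 1000.0, 1e-6  # the 1 kOhm / 1 uF values from Figure 2.17
fc = cutoff_frequency(r, c)
x_c_at_fc = 1.0 / (2 * math.pi * fc * c)

print(round(fc, 1))         # 159.2 (Hz)
print(round(x_c_at_fc, 1))  # 1000.0: at f_c the reactance equals R
```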

At frequencies below fc/10 (1 decade below fc; musicians like to think in octaves, 2 times the frequency, while engineers like to think in decades, 10 times the frequency), we consider the output to be equal to the input – therefore at 0 dB. At frequencies 1 decade above fc and higher, we drop 6 dB in amplitude every time we go up 1 octave, so we say that we have a slope of -6 dB per octave (this is also expressed as -20 dB per decade – it means the same thing).
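Treating the circuit as a voltage divider, the capacitor's share of the input is XC/Z. With Equation 2.86, this works out to 1/√(1 + (f/fc)²). Here is a minimal Python sketch of that magnitude response using fc ≈ 159 Hz. The function name is hypothetical.

```python
import math


def lowpass_gain_db(freq_hz: float, fc_hz: float) -> float:
    """Level across the capacitor relative to the input, in dB.

    V_C / V_in = X_C / Z = 1 / sqrt(1 + (f / f_c)^2)
    """
    return -10 * math.log10(1 + (freq_hz / fc_hz) ** 2)


fc = 159.15  # Hz, from R = 1 kOhm and C = 1 uF
for f in (fc / 10, fc, 10 * fc, 20 * fc):
    print(f"{f:8.1f} Hz: {lowpass_gain_db(f, fc):7.2f} dB")
```

At fc/10 the output is within 0.05 dB of the input, and at fc it is -3.01 dB. Going from 10 fc to 20 fc (one octave) drops the level by about another 6 dB.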

We also have to consider, however, that the change in voltage across the capacitor isn’t always keeping up with the change in voltage across the function generator. In fact, at higher frequencies, it lags behind the input voltage by 90°. Up to 1 decade below fc, we are in phase with the input; at fc, we are 45° behind the input voltage; and at 1 decade above fc and higher, we are lagging by 90°. The resulting graph looks like Figure 2.21:

As is evident in the graph, a lag in the sine wave is expressed as a positive phase, therefore the voltage across the capacitor goes from 0° to 90° relative to the input voltage.
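For a single resistor and capacitor, the exact lag of the capacitor voltage is arctan(f/fc). Here is a minimal Python sketch that reports it as a positive number, following the convention of the graph. The function name is hypothetical.

```python
import math


def lowpass_phase_lag_deg(freq_hz: float, fc_hz: float) -> float:
    """How far the capacitor voltage lags the input, in degrees.

    Reported as a positive number, following the convention in the text.
    """
    return math.degrees(math.atan(freq_hz / fc_hz))


fc = 159.15  # Hz
for f in (fc / 10, fc, 10 * fc):
    print(f"{f:8.1f} Hz: lag {lowpass_phase_lag_deg(f, fc):5.1f} degrees")
```

The exact curve gives about 5.7° at fc/10, 45° at fc and 84.3° at 10 fc. The 0° and 90° figures in the text are the usual straight-line approximations, and the lag only reaches 90° in the limit.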

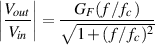

While all that is going on, what’s happening across the resistor? Well, since we’re considering that this circuit is a fancy type of voltage divider, we can say that if the voltage across the capacitor is high, the voltage across the resistor is low, and if the voltage across the capacitor is low, then the voltage across the resistor is high. Another way to consider this is to say that if the frequency is low, then the current through the circuit is low (because XC is high) and therefore VR is low. If the frequency is high, the current is high (because XC is low) and VR is high.
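The voltage-divider argument can be checked with complex arithmetic. In this Python sketch (an illustration, not the book’s method), the capacitor is modelled as the impedance 1/(j2πfC), the component values are the 1 kΩ and 1 µF from Figure 2.17, and the 1 V input is an assumption:

```python
import math

R = 1e3     # ohms (Figure 2.17 example)
C = 1e-6    # farads (Figure 2.17 example)
V_IN = 1.0  # volts, an assumed input amplitude

def rc_divider(f_hz: float):
    """Return (|I|, |VR|, |VC|) for a series RC circuit driven at f_hz."""
    z_c = 1.0 / (1j * 2.0 * math.pi * f_hz * C)  # capacitor impedance, magnitude XC
    current = V_IN / (R + z_c)                    # same current through both parts
    v_r = current * R
    v_c = current * z_c
    return abs(current), abs(v_r), abs(v_c)

for f in (10.0, 159.15, 2000.0):
    i, vr, vc = rc_divider(f)
    print(f"{f:8.2f} Hz: I = {i * 1e3:6.3f} mA, VR = {vr:.3f} V, VC = {vc:.3f} V")
```

At 10 Hz the current is small and nearly all of the input appears across the capacitor; at 2 kHz the current is larger and nearly all of it appears across the resistor.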

The result is Figure 2.20, showing the voltage across the resistor relative to frequency. Again, we’re plotting the amplitude of the voltage as it relates to the input voltage, in dB.

Taking the output across the resistor, we now have a high-pass filter. The fc is again the frequency where we’re at -3 dB relative to the input, and the equation to calculate it is the same as for the low-pass filter.

$$f_c = \frac{1}{2\pi R C} \qquad (2.90)$$

The slope of the filter is now +6 dB per octave (+20 dB per decade), because the output increases by 6 dB each time we go up one octave. That slope holds for frequencies below fc/10 (1 decade below fc). At frequencies more than 1 decade above fc (that is, above 10fc), we are at 0 dB relative to the input.
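The mirror-image behaviour can be sketched the same way, assuming the standard first-order high-pass magnitude (f/fc)/√(1 + (f/fc)²) for the voltage across the resistor:

```python
import math

def highpass_gain_db(f_hz: float, fc_hz: float) -> float:
    """Level across the resistor relative to the input, in dB,
    assuming a first-order RC high-pass: (f/fc) / sqrt(1 + (f/fc)^2)."""
    ratio = f_hz / fc_hz
    return 20.0 * math.log10(ratio / math.sqrt(1.0 + ratio ** 2))

fc = 159.15  # Hz
for multiple in (0.01, 0.05, 0.1, 1, 10):
    print(f"{multiple:>5} x fc: {highpass_gain_db(multiple * fc, fc):7.2f} dB")
```

Going from fc/20 to fc/10 raises the level by about 6 dB, the level at fc is about -3 dB, and at 10fc it is within 0.05 dB of the input.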

The phase response is similar, but mirrored. Now the sine wave that we see across the resistor is ahead of the input. This is because, as we said before, the current feeding the capacitor leads the capacitor’s voltage by 90°. At extremely low frequencies, we’ve established that the voltage across the capacitor is in phase with the input, but the current leads it by 90°. Since the voltage across the resistor is in phase with the current through it, the voltage across the resistor must lead the voltage across the capacitor (and therefore the input voltage) by 90° (up to fc/10).

Again, at fc, the voltage across the resistor is 45° away from the input, but this time it is ahead, not behind.

Finally, at 10fc and above, the voltage across the resistor is in phase with the input. This all results in the phase response graph shown in Figure 2.21.

As you can see in Figure 2.21, the voltage across the resistor and the voltage across the capacitor are always 90° out of phase with each other, but their relationships with the input voltage change.
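Both phase responses, and the constant 90° between them, fall out of the same complex-number voltage-divider model. This sketch assumes the 1 kΩ / 1 µF values again and uses the common convention in which a lead is a positive angle and a lag is negative (so the capacitor’s lag shows up as a negative number, unlike the graph):

```python
import cmath
import math

R = 1e3   # ohms
C = 1e-6  # farads

def phases_deg(f_hz: float):
    """Return the phase (degrees) of VR and of VC relative to the input voltage."""
    z_c = 1.0 / (1j * 2.0 * math.pi * f_hz * C)
    h_r = R / (R + z_c)    # VR / VIN
    h_c = z_c / (R + z_c)  # VC / VIN
    return math.degrees(cmath.phase(h_r)), math.degrees(cmath.phase(h_c))

fc = 1.0 / (2.0 * math.pi * R * C)
for multiple in (0.1, 1, 10):
    p_r, p_c = phases_deg(multiple * fc)
    print(f"{multiple:>4} x fc: VR {p_r:+6.1f} deg, VC {p_c:+6.1f} deg, "
          f"difference {p_r - p_c:.1f} deg")
```

At fc the resistor voltage leads by 45° and the capacitor voltage lags by 45°; at every frequency the difference between them is 90°.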

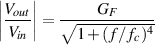

There’s only one thing left to discuss: an apparent conflict in what we have learned (though it isn’t really a conflict). We know that fc is the point where the voltage across the capacitor and the voltage across the resistor are both -3 dB relative to the input. Therefore the two voltages are equal, yet when we add them together, we go up by only 3 dB, not by the 6 dB we would expect. This is because the two waves are 90° apart. If they were in phase, they would add to produce a gain of 6 dB; since they are 90° out of phase, their sum is only 3 dB higher.
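The 3 dB result can be checked numerically. This sketch adds two equal-amplitude sine waves sampled over one cycle and measures the peak of the sum relative to one wave; sampling is just one convenient way to show it:

```python
import math

def sum_level_db(phase_deg: float, samples: int = 3600) -> float:
    """Peak of sin(t) + sin(t + phase), relative to one sine wave, in dB."""
    phase = math.radians(phase_deg)
    peak = max(
        abs(math.sin(t) + math.sin(t + phase))
        for t in (2.0 * math.pi * n / samples for n in range(samples))
    )
    return 20.0 * math.log10(peak)  # a single sine wave has a peak of 1

print(f"in phase:         {sum_level_db(0):.2f} dB")
print(f"90 degrees apart: {sum_level_db(90):.2f} dB")
```

At fc each of the two voltages is about 0.707 of the input, so their sum, 90° apart, is 0.707 × √2 = 1: exactly the input voltage.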

We know that the voltage across the capacitor and the voltage across the resistor are 90° apart at all frequencies, regardless of their phase relationships to the input voltage.

Consider the resistance and the capacitive reactance as two components of the impedance that are 90° apart. We can then plot the relationship between these three values using a right triangle, as shown in Figure 2.22.

At this point, it should be easy to see why the impedance is the square root of the sum of the squares of R and XC. In addition, it becomes intuitive that, as the frequency goes to ∞ Hz, XC goes to zero and the hypotenuse of the triangle, Z, becomes the same as R. If the frequency goes to 0 Hz (DC), XC goes to ∞Ω as does Z.
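The triangle translates directly into Z = √(R² + XC²). A minimal Python sketch, assuming the 1 kΩ / 1 µF example values and XC = 1/(2πfC):

```python
import math

def rc_impedance(r_ohms: float, c_farads: float, f_hz: float):
    """Return (XC, |Z|) for a series RC circuit, using the impedance triangle."""
    xc = 1.0 / (2.0 * math.pi * f_hz * c_farads)
    z = math.hypot(r_ohms, xc)  # hypotenuse: sqrt(R^2 + XC^2)
    return xc, z

for f in (1.0, 159.15, 1e6):
    xc, z = rc_impedance(1e3, 1e-6, f)
    print(f"{f:>9.2f} Hz: XC = {xc:10.2f} ohms, Z = {z:10.2f} ohms")
```

At very low frequencies Z is dominated by XC; at very high frequencies Z approaches R (1000 ohms).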

Go back to the concept of a voltage divider using two resistors. Remember that the ratio of the two resistances is the same as the ratio of the voltages across the two resistors.

$$\frac{V_1}{V_2} = \frac{R_1}{R_2} \qquad (2.91)$$

If we consider the RC circuit in Figure 2.17, we can treat the two components in a similar manner; however, the phase change must be taken into consideration. Figure 2.23 shows a triangle exactly the same as that in Figure 2.22, now showing the relationship between the input voltage and the voltages across the resistor and the capacitor.
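As a sketch of the voltage triangle, assuming (as in the two-resistor case) that each voltage is proportional to its own side of the impedance triangle, VR and VC are the two legs and VIN is the hypotenuse. The 500 ohm reactance is a hypothetical value:

```python
import math

def triangle_voltages(v_in: float, r_ohms: float, xc_ohms: float):
    """Split v_in between R and C using the impedance triangle (magnitudes only)."""
    z = math.hypot(r_ohms, xc_ohms)
    v_r = v_in * r_ohms / z
    v_c = v_in * xc_ohms / z
    return v_r, v_c

v_r, v_c = triangle_voltages(1.0, 1e3, 500.0)  # hypothetical XC = 500 ohms
print(f"VR = {v_r:.3f} V, VC = {v_c:.3f} V")
print(f"VR/VC = {v_r / v_c:.2f} (same as R/XC = {1e3 / 500.0:.2f})")
print(f"sqrt(VR^2 + VC^2) = {math.hypot(v_r, v_c):.3f} V (the input)")
```

The plain arithmetic sum VR + VC is larger than VIN here; only the right-angle sum gives back the input.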

So, once again, we can see that, as the frequency goes up, the voltage across the capacitor goes down until, at ∞ Hz, the voltage across the cap is 0 V and VIN = VR.

Notice as well that this triangle gives us the phase relationships of the voltages. The voltages across the resistor and the capacitor are always 90° apart, but the phase of these two voltages in relation to the input voltage changes according to the value of the capacitive reactance, which is, in turn, determined by the capacitance and the frequency.

So, now we can see that, as the frequency goes down, the current goes down, the voltage across the resistor goes down, the voltage across the capacitor approaches the input voltage, the phase of the low-pass filter approaches 0° and the phase of the high-pass filter approaches 90°. As the frequency goes up, the voltage across the capacitor goes down, the voltage across the resistor approaches the input voltage, the phase of the low-pass filter approaches 90° and the phase of the high-pass filter approaches 0°.

Once upon a time, you did an experiment, probably around grade 3 or so, where you put a piece of paper on top of a bar magnet and sprinkled iron filings on the paper. The result was a pretty pattern that spread from pole to pole of the magnet. The iron filings were aligning themselves along what are called magnetic lines of force. These lines of force spread out around a magnet and have some effect on the things around them (like iron filings and compasses, for example). These lines of force have a direction: they go from the north pole of the magnet to the south pole, as shown in Figures 2.24 and 2.25.

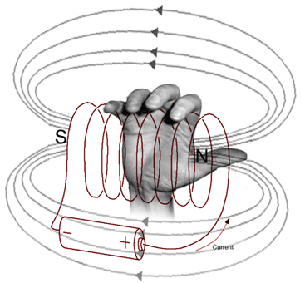

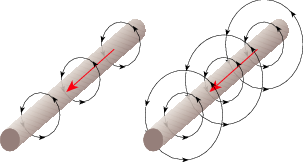

It turns out that there is a relationship between current in a wire and magnetic lines of force. If we send current through a wire, we generate magnetic lines of force that circle around the wire. The more current, the farther the lines of force expand out from the wire. The direction of the magnetic lines of force can be determined using what is probably the first calculator you ever used... your right hand. Look at Figure 2.26. As you can see, if your thumb points in the direction of the current and you wrap your fingers around the wire, the direction your fingers wrap is the direction of the magnetic field. (You may be asking yourself “So what!?”, but we’ll get there...)
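The right-hand grip rule describes the same directional relationship as a vector cross product. In this sketch, the field direction at a point beside the wire is taken as the cross product of the current direction with the direction from the wire to that point; only direction is modelled, not field strength, and the helper name is hypothetical.

```python
def cross(a, b):
    """Cross product of two 3-D vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

current = (0, 0, 1)  # current flowing "up", along +z

# Points around the wire (east, north, west, south), seen from above.
for name, point in [("east", (1, 0, 0)), ("north", (0, 1, 0)),
                    ("west", (-1, 0, 0)), ("south", (0, -1, 0))]:
    print(f"{name:>5}: field points along {cross(current, point)}")
```

The field circles counterclockwise when seen from above, which is the way the fingers of a right hand curl when the thumb points up.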

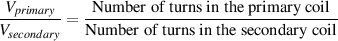

Let’s now take this wire and coil it like a spring, so that each point on one turn of the coil sits next to a point on the adjacent turn of the same wire. The magnetic field around each section of the wire is then reinforced by the fields around the adjacent sections, and the whole coil acts as one big magnetic field generator. When this happens, the coil has an overall magnetic field similar to that of the bar magnet in Figures 2.24 and 2.25.

We can use our right hand again to figure out which end of the coil is north and which is south. If you wrap your fingers around the coil in the direction of the current, you will find that your thumb is pointing north, as is shown in Figure 2.27. Remember, again, that if we increase the current through the wire, the magnetic lines of force extend farther from the coil.

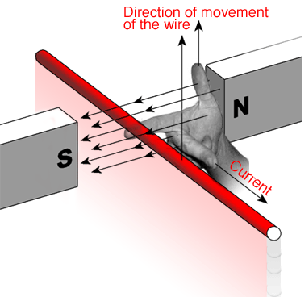

One more interesting relationship between magnetism and current is that if we move a wire in a magnetic field, the movement will create a current in the wire. Essentially, as we cut through the magnetic lines of force, we cause the electrons to move in the wire. The faster we move the wire, the more current we generate. Again, our right hand helps us determine which way the current is going to flow. If you hold your hand as is shown in Figure 2.28, point your index finger in the direction of the magnetic lines of force (N to S...) and your thumb in the direction of the movement of the wire relative to the lines of force, your middle finger will point in the direction of the current.
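The hand rule in Figure 2.28 can also be written as a cross product: the direction of the (conventional) current is the direction of v × B, where v is the wire’s motion and B points from N to S. This sketch reports directions only, and the helper names are hypothetical.

```python
def cross(a, b):
    """Cross product of two 3-D vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def induced_current_direction(motion, field):
    """Direction of conventional current in a wire moving through a field: motion x field."""
    return cross(motion, field)

field = (0, 1, 0)   # lines of force from N to S, along +y (index finger)
motion = (0, 0, 1)  # wire moving along +z (thumb)
print(induced_current_direction(motion, field))      # middle finger
print(induced_current_direction((0, 0, -1), field))  # reverse the motion
```

Reversing the motion (or the field) reverses the direction of the current, as the hand rule predicts.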

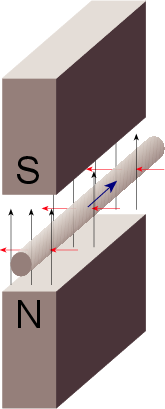

We saw in Section 2.6 that if you have a piece of wire moving through a magnetic field, you will induce current in the wire. The direction of the current is dependent on the direction of the magnetic lines of force and the direction of movement of the wire. Figure 2.29 shows an example of this effect.

We also saw that the reverse is true. If you have a piece of wire with current running through it, then you create a magnetic field around the wire with the magnetic lines of force going in circles around it. The direction of the magnetic lines of force is dependent on the direction of the current. The strength of the magnetic field and, therefore, the distance it extends from the wire is dependent on the amount of current. An example of this is shown in Figure 2.30 where we see two different wires with two different magnetic fields due to two different currents.

What happens if we combine these two effects? Let’s take a piece of wire and connect it to a circuit that lets us run current through it. Then, we’ll put a second piece of wire next to the first piece, as is shown in Figure 2.31. Finally, we’ll increase the current over time, so that the magnetic field expands outwards from the first wire. What will happen? The magnetic lines of force will expand outwards from around the first wire and cut through the second wire. This is essentially the same as if we had a constant magnetic field between two magnets and we moved the wire through it; we’re just moving the magnetic field instead of the wire. Consequently, we’ll induce a current in the second wire.

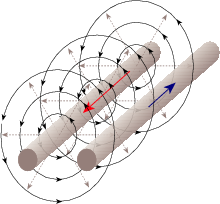

Now let’s go a step further and put a current that is always changing through the first wire. The most common form of this signal in the electrical world is a sinusoidal waveform that alternates back and forth between positive and negative current (meaning that it changes direction). Figure 2.32 shows the result when we put an everyday AC signal into the first wire in Figure 2.31.

Let’s take a piece of wire and wind it into a coil consisting of two turns, as is shown in Figure 2.33. One thing to beware of is that we aren’t just wrapping bare wire in a coil: we have to make sure that adjacent sections of the wire don’t touch each other, so we coat the wire with a thin layer of insulation.

Let’s now put that coil in a circuit where it’s in series with a resistor, a voltage supply and a switch. We’ll also connect probes across the coil to see what the voltage difference across it is. This circuit will look like Figure 2.34.

Now think of Figure 2.31 as showing just the top two adjacent sections of wire in the coil in Figure 2.33. This should raise a question or two. As we saw in Figure 2.31, increasing the current in one of the wires induces a current in the other wire in the opposite direction. If these two wires are actually just two sections of the same coil, then the current we’re putting through the coil goes through the whole length of wire. However, if we increase that current, we induce a current in the opposite direction in the adjacent turns of the coil, which, as we know, are part of the same wire. Therefore, by increasing the current in the wire, we also increase the induced current pushing in the opposite direction, opposing the current that we’re putting in. This opposition results in a measurable voltage difference across the coil called back electromotive force, or back EMF. The back EMF is proportional to the rate of change of the current (and therefore the slope, if we’re looking at a graph), not to the current itself, since it’s proportional to the speed at which the wire is cutting through the magnetic field. Therefore, the amount by which the coil (which we’ll now start calling an inductor, because we are inducing a current in the opposite direction) opposes an alternating current is proportional to the frequency, since the higher the frequency, the faster the current changes.
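To see why the opposition grows with frequency, here is a sketch based on the standard inductor relationship V = L·di/dt (not derived in the text). It estimates the peak back EMF for a sinusoidal current using finite differences; the inductance and current values are hypothetical.

```python
import math

L_HENRIES = 10e-3  # hypothetical inductance
I_PEAK = 0.1       # hypothetical peak current, amps

def peak_back_emf(f_hz: float, steps: int = 10_000) -> float:
    """Largest |L * di/dt| over one cycle of i(t) = I_PEAK * sin(2*pi*f*t),
    estimated with forward finite differences."""
    dt = (1.0 / f_hz) / steps
    peak = 0.0
    for n in range(steps):
        t = n * dt
        di = I_PEAK * (math.sin(2 * math.pi * f_hz * (t + dt))
                       - math.sin(2 * math.pi * f_hz * t))
        peak = max(peak, abs(L_HENRIES * di / dt))
    return peak

for f in (100.0, 1000.0, 2000.0):
    print(f"{f:6.0f} Hz: peak back EMF = {peak_back_emf(f):.3f} V")
```

Doubling the frequency doubles the peak back EMF, even though the peak current stays the same.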

Armed with this knowledge, let’s think about the circuit in Figure 2.34. Before we close the switch, there can’t be any current going through the circuit, because it’s not a circuit: there’s a break in the loop. Then, we close the switch. There is an instantaneous change in voltage, so there should be an instantaneous change in current. However, we know that the inductor opposes a change in current, and that the faster the change, the more it opposes it. Since closing the switch tries to change the current infinitely fast (from nothing to something in 0 seconds), the inductor opposes the change by an amount equal to the amount that the current should have changed. Therefore, nothing happens. For an instant, no current flows through the system, because the inductor acts as a generator pushing current in the opposite direction.

An instant after this, the voltage has not changed (because the source is DC), so the attempted current has not changed from its new value. The inductor therefore “sees” less change and opposes the current a little less. As we get further and further in time from the moment when we closed the switch, the inductor forgets more and more that a change has happened, and pushes current in the opposite direction less and less, until finally it stops pushing back at all: the current is constant, the magnetic lines of force are no longer moving, and therefore no back EMF is being generated.

What I just described is plotted as the red line in Figure 2.35.

Okay, that takes care of the current, but what about the voltage difference across the inductor? Well, we know that if there is no current going through the inductor, then there is no current going through the resistor. If there is no current going through the resistor, then there is no voltage difference across it, because V = IR. Therefore, when we first close the switch, all of the voltage of the voltage supply is across the inductor, because there is no difference across the resistor. As we get further and further in time from the moment the switch closed, there is more and more current going through the resistor, and therefore more and more voltage across it, so there is less and less voltage difference across the inductor. Eventually, the inductor stops pushing back against the flow of current, so the current sees the inductor as just a piece of wire. Therefore, eventually, all of the voltage drop is across the resistor and there is no voltage difference across the inductor.

This behaviour is shown as the blue line in Figure 2.35.

If you’ve noticed that the graph in Figure 2.35 looks remarkably similar to the one in Figure 2.13, you’re right. Notice that the current rises to 63.2% of its final value in 1 time constant, and that it effectively reaches its maximum (with 0 voltage difference across the inductor) after 5 time constants. Notice, however, that a capacitor opposes a change in voltage, whereas an inductor opposes a change in current.
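Those time-constant figures come from exponential curves. Assuming the standard RL step response, with time constant τ = L/R, current i(t) = (V/R)(1 - e^(-t/τ)) and inductor voltage V·e^(-t/τ), a short sketch tabulates both; the component values are hypothetical.

```python
import math

V_SUPPLY = 10.0  # volts (hypothetical)
R = 100.0        # ohms (hypothetical)
L = 10e-3        # henries (hypothetical)
TAU = L / R      # time constant, seconds

def rl_step(t_s: float):
    """Current and inductor voltage t_s seconds after the switch closes."""
    current = (V_SUPPLY / R) * (1.0 - math.exp(-t_s / TAU))
    v_inductor = V_SUPPLY * math.exp(-t_s / TAU)
    return current, v_inductor

final_current = V_SUPPLY / R
for k in range(6):
    i, v_l = rl_step(k * TAU)
    print(f"t = {k} tau: I = {100 * i / final_current:5.1f}% of final, "
          f"V_L = {v_l:5.2f} V, V_R = {i * R:5.2f} V")
```

After one time constant the current is at about 63.2% of its final value, and after five it is above 99%, while the inductor and resistor voltages always add up to the supply voltage.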

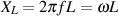

This effect of the inductor opposing a change in current produces something called inductive reactance, abbreviated XL, which is measured in Ω. It’s similar to capacitive reactance in that it opposes a change in the signal without consuming power. This time, however, the roles of current and voltage are reversed: when we apply a change in voltage to the inductor, the current through it changes slowly, but the voltage across it changes quickly.
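Inductive reactance is usually written XL = 2πfL, the mirror image of XC = 1/(2πfC). That formula isn’t given in the text above, so treat this as a sketch; the 10 mH and 1 µF values are hypothetical.

```python
import math

def inductive_reactance(f_hz: float, l_henries: float) -> float:
    """XL in ohms: XL = 2 * pi * f * L (rises with frequency)."""
    return 2.0 * math.pi * f_hz * l_henries

def capacitive_reactance(f_hz: float, c_farads: float) -> float:
    """XC in ohms: XC = 1 / (2 * pi * f * C) (falls with frequency)."""
    return 1.0 / (2.0 * math.pi * f_hz * c_farads)

for f in (100.0, 1000.0, 10000.0):
    print(f"{f:>8.0f} Hz: XL(10 mH) = {inductive_reactance(f, 10e-3):8.2f} ohms, "
          f"XC(1 uF) = {capacitive_reactance(f, 1e-6):8.2f} ohms")
```

As the frequency rises, the inductor’s opposition grows while the capacitor’s shrinks.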